Abstract

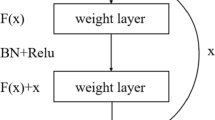

This paper proposes a PGGAN-based framework for automatically restoring occluded iris regions in iris images. First, to stabilize training, a fade-in operation is introduced whenever the resolution is doubled, so that the transition to the higher resolution is smooth. At the same time, the deconvolution layers are replaced with upsampling followed by convolution, so that the generator avoids checkerboard artifacts. Second, white squares are used to mask real images, imitating iris images with light spots in real scenes, and the network restores the masked regions. Finally, the restored images and the incomplete images are used as two input classes for the same recognition network to verify the validity of the restored images. Extensive comparative experiments on the publicly available IITD dataset show that the proposed restoration framework is feasible and produces realistic results.
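The three operations named in the abstract (white-square masking, upsampling in place of deconvolution, and fade-in blending at a resolution doubling) can be illustrated with a minimal, framework-free sketch. It is not the authors' implementation: the function names, the nested-list image representation, the square position and size, and the use of nearest-neighbour upsampling are all assumptions made for illustration, and the convolution that would follow the upsampling step is omitted.

```python
from typing import List

# Assumption: a grayscale image is a list of rows with values in [0.0, 1.0].
Image = List[List[float]]


def mask_with_white_square(img: Image, top: int, left: int, size: int) -> Image:
    """Return a copy of img with a size x size white square painted on it.

    This imitates a light spot that hides part of the iris texture.
    """
    out = [row[:] for row in img]
    for r in range(max(top, 0), min(top + size, len(out))):
        for c in range(max(left, 0), min(left + size, len(out[r]))):
            out[r][c] = 1.0
    return out


def upsample_nearest_2x(img: Image) -> Image:
    """Double the resolution by repeating each pixel in a 2 x 2 block.

    In a generator this step would be followed by a convolution (omitted here).
    """
    out: Image = []
    for row in img:
        wide = [v for v in row for _ in range(2)]
        out.append(wide)
        out.append(wide[:])
    return out


def fade_in(low_res: Image, high_res: Image, alpha: float) -> Image:
    """Linearly blend the upsampled low-resolution output with the new
    high-resolution output; alpha ramps from 0 to 1 during the transition."""
    up = upsample_nearest_2x(low_res)
    return [
        [(1.0 - alpha) * u + alpha * h for u, h in zip(up_row, hi_row)]
        for up_row, hi_row in zip(up, high_res)
    ]


# Hypothetical 2 x 2 "iris" patch and a 4 x 4 high-resolution output.
iris = [[0.2, 0.4], [0.6, 0.8]]
high = [[0.5] * 4 for _ in range(4)]

masked = mask_with_white_square(iris, top=0, left=0, size=1)
blended = fade_in(iris, high, alpha=0.5)
```

With `alpha = 0` the blend returns only the upsampled low-resolution image, and with `alpha = 1` only the new high-resolution output, which is what lets the newly added layers take over gradually rather than abruptly.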

Acknowledgments

This work is supported by the National Natural Science Foundation of China under Grant 61762067.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Zeng, Y., Chen, Y., Gan, H., Zeng, Z. (2021). Incomplete Texture Repair of Iris Based on Generative Adversarial Networks. In: Feng, J., Zhang, J., Liu, M., Fang, Y. (eds) Biometric Recognition. CCBR 2021. Lecture Notes in Computer Science, vol 12878. Springer, Cham. https://doi.org/10.1007/978-3-030-86608-2_37

DOI: https://doi.org/10.1007/978-3-030-86608-2_37

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-86607-5

Online ISBN: 978-3-030-86608-2

eBook Packages: Computer Science, Computer Science (R0)