Abstract

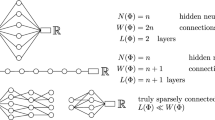

We provide a constructive proof of the theorem on function approximation by perceptrons (cf. Leshno et al. [1], Hornik [2]) when the activation function ψ is C^∞ with all its derivatives nonzero at 0. We deal with uniform approximation on compact sets of continuous functions on ℝ^d, d ≥ 1. This approach is elementary and provides some approximation results for the derivatives, along with some bounds for the hidden layer.
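The hypothesis that every derivative of ψ is nonzero at 0 can be illustrated numerically. The sketch below is not taken from the paper and may differ from the authors' argument: it assumes ψ = exp as a convenient activation (all of its derivatives at 0 equal 1) and shows that a linear combination of three hidden units, arranged as a second-order finite difference in the input weight `h`, approximates the monomial x² uniformly on [-1, 1]. The names `psi`, `approx_square`, and `max_error` are hypothetical.

```python
import math


def psi(t):
    # Illustrative activation (an assumption, not from the paper):
    # exp is C-infinity and every derivative at 0 equals 1, hence nonzero.
    return math.exp(t)


def approx_square(x, h=1e-2):
    """Approximate x**2 with three hidden units: psi(h*x), psi(0), psi(-h*x).

    This is a second-order central difference in the weight h,
    normalized by psi''(0), which equals 1 for psi = exp.
    """
    psi_second_at_zero = 1.0
    numerator = psi(h * x) - 2.0 * psi(0.0) + psi(-h * x)
    return numerator / (h * h * psi_second_at_zero)


def max_error(h=1e-2, n_points=201):
    # Largest absolute error on an evenly spaced grid over [-1, 1].
    xs = [-1.0 + 2.0 * i / (n_points - 1) for i in range(n_points)]
    return max(abs(approx_square(x, h) - x * x) for x in xs)


if __name__ == "__main__":
    for h in (1e-1, 1e-2):
        print(f"h = {h:g}: max error on [-1, 1] = {max_error(h):.3e}")
```

Shrinking `h` reduces the uniform error (roughly like h² in this example) until floating-point cancellation takes over. Higher-degree monomials would need higher-order differences and the corresponding nonzero derivative ψ^(n)(0), which is where the assumption on ψ enters.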

References

[1] M. Leshno, V. Ya. Lin, A. Pinkus, S. Schocken. Multilayer feedforward networks with non-polynomial activation function can approximate any function, Neural Networks, vol. 6, pp. 861–867, 1993.

[2] K. Hornik. Some new results on neural network approximation, Neural Networks, vol. 6, pp. 1069–1072, 1993.

[3] Y. Ito. Approximation of functions on a compact set by finite sums of a sigmoid function without scaling, Neural Networks, vol. 4, pp. 817–826, 1991.

[4] G.G. Lorentz. Approximation of Functions, Chelsea Publishing Company, New York, 188 p., 1966.

[5] G.G. Lorentz. Bernstein Polynomials, Chelsea Publishing Company, New York, 134 p., 1986.

[6] A.R. Barron. Universal approximation bounds for superpositions of a sigmoidal function, IEEE Transactions on Information Theory, vol. 39, no. 3, pp. 930–945, 1993.

[7] K. Hornik. Degree of approximation results for feedforward networks approximating unknown mappings and their derivatives, Neural Computation, vol. 6, pp. 1262–1275, 1994.

[8] J.G. Attali, G. Pagès. Approximation of functions by perceptrons: a new approach, preprint of SAMOS (Paris, France).

Cite this article

Attali, JG., Pagès, G. Approximation of functions by perceptrons: a new approach. Neural Process Lett 2, 19–22 (1995). https://doi.org/10.1007/BF02332161

DOI: https://doi.org/10.1007/BF02332161