Abstract

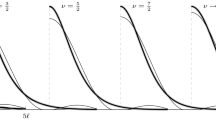

This paper is mainly devoted to a precise analysis of which penalties should be used to perform model selection by minimizing a penalized least-squares type criterion within a general Gaussian framework that includes the classical ones. Compared with our previous paper on this topic (Birgé and Massart in J. Eur. Math. Soc. 3, 203–268 (2001)), we give more elaborate forms of the penalties and show that they are, in some sense, optimal. Specifically, we provide more precise upper bounds for the risk of the penalized estimators, together with lower bounds for the penalty terms which show that using smaller penalties may lead to disastrous results. These lower bounds can also be used to design a practical strategy for estimating the penalty from the data when the amount of noise is unknown. We illustrate the method on the problem of estimating a piecewise constant signal in Gaussian noise when neither the number nor the locations of the change points are known.
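As a rough illustration of the change-point setting mentioned above, the following Python sketch selects the number of segments of a piecewise constant fit by minimizing a penalized least-squares criterion. It is a minimal sketch under stated assumptions, not the authors' procedure: the function names, the penalty shape `sigma2 * D * (c1 * log(n / D) + c2)`, the default constants `c1` and `c2`, and the toy signal are all hypothetical choices made for the example, and the noise variance `sigma2` is assumed known.

```python
import math


def best_rss_by_segments(y, max_segments):
    """Return rss where rss[d] is the smallest residual sum of squares of a
    piecewise constant fit of y with exactly d segments (rss[0] is unused)."""
    n = len(y)
    # Prefix sums give the cost of any segment in O(1).
    s1 = [0.0] * (n + 1)
    s2 = [0.0] * (n + 1)
    for i, v in enumerate(y):
        s1[i + 1] = s1[i] + v
        s2[i + 1] = s2[i] + v * v

    def cost(i, j):
        # Residual sum of squares when y[i:j] is fitted by its mean (j > i).
        s = s1[j] - s1[i]
        return (s2[j] - s2[i]) - s * s / (j - i)

    inf = float("inf")
    # dp[d][j] = best RSS for y[:j] split into exactly d segments.
    dp = [[inf] * (n + 1) for _ in range(max_segments + 1)]
    dp[0][0] = 0.0
    for d in range(1, max_segments + 1):
        for j in range(d, n + 1):
            dp[d][j] = min(dp[d - 1][i] + cost(i, j) for i in range(d - 1, j))
    return [dp[d][n] for d in range(max_segments + 1)]


def select_num_segments(y, sigma2, max_segments, c1=2.0, c2=5.0):
    """Pick the number of segments D minimizing RSS(D) + pen(D).

    The penalty shape and the default constants c1, c2 are assumptions
    made for this illustration only.
    """
    n = len(y)
    rss = best_rss_by_segments(y, max_segments)

    def criterion(d):
        penalty = sigma2 * d * (c1 * math.log(n / d) + c2)
        return rss[d] + penalty

    return min(range(1, max_segments + 1), key=criterion)


# Toy signal (hypothetical): three plateaus at 0, 5, 0 with a small
# deterministic perturbation of amplitude 0.1 standing in for noise.
levels = [0.0, 5.0, 0.0]
y_demo = [levels[i // 20] + 0.1 * (-1) ** i for i in range(60)]
d_hat = select_num_segments(y_demo, sigma2=0.01, max_segments=6)
print("selected number of segments:", d_hat)
```

The dynamic program costs on the order of `max_segments * n**2` operations, which is adequate for short signals. With a penalty that is too small (for instance `c1 = c2 = 0`), the criterion reduces to the residual sum of squares and always prefers the largest number of segments allowed, which is the kind of failure the lower bounds on the penalty are meant to rule out.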

References

Abramovich F., Benjamini Y., Donoho D.L., Johnstone I.M. (2006). Adapting to unknown sparsity by controlling the false discovery rate. Ann. Statist. 34

Akaike H. (1970). Statistical predictor identification. Ann. Inst. Statist. Math. 22:203–217

Akaike H. (1973). Information theory and an extension of the maximum likelihood principle. In: Petrov B.N., Csáki F. (eds) Proceedings 2nd International Symposium on Information Theory. Akadémiai Kiadó, Budapest, pp. 267–281

Akaike H. (1974). A new look at the statistical model identification. IEEE Trans. Autom. Control 19:716–723

Akaike H. (1978). A Bayesian analysis of the minimum AIC procedure. Ann. Inst. Statist. Math. 30, Part A:9–14

Amemiya T. (1985). Advanced Econometrics. Basil Blackwell, Oxford

Barron A.R., Birgé L., Massart P. (1999). Risk bounds for model selection via penalization. Probab. Theory Relat. Fields 113:301–413

Barron A.R., Cover T.M. (1991). Minimum complexity density estimation. IEEE Trans. Inf. Theory 37:1034–1054

Birgé L. (2001). An alternative point of view on Lepski’s method. In: de Gunst M.C.M., Klaassen C.A.J., van der Vaart A.W. (eds) State of the Art in Probability and Statistics, Festschrift for Willem R. van Zwet. Institute of Mathematical Statistics, Lecture Notes–Monograph Series, Vol. 36, pp. 113–133

Birgé L., Massart P. (1998). Minimum contrast estimators on sieves: exponential bounds and rates of convergence. Bernoulli 4:329–375

Birgé L., Massart P. (2001). Gaussian model selection. J. Eur. Math. Soc. 3:203–268

Birgé L., Massart P. (2001). A generalized C_p criterion for Gaussian model selection. Technical Report No 647. Laboratoire de Probabilités, Université Paris VI. http://www.proba.jussieu.fr/mathdoc/preprints/index.html#2001

Daniel C., Wood F.S. (1971). Fitting Equations to Data. Wiley, New York

Draper N.R., Smith H. (1981). Applied Regression Analysis, 2nd edn. Wiley, New York

Efron B., Hastie T., Johnstone I.M., Tibshirani R. (2004). Least angle regression. Ann. Statist. 32:407–499

Feller W. (1968). An Introduction to Probability Theory and its Applications, Vol I (3rd edn). Wiley, New York

George E.I., Foster D.P. (2000). Calibration and empirical Bayes variable selection. Biometrika 87:731–747

Gey S., Nédélec E. (2005). Model selection for CART regression trees. IEEE Trans. Inf. Theory 51:658–670

Guyon X., Yao J.F. (1999). On the underfitting and overfitting sets of models chosen by order selection criteria. J. Multivar. Anal. 70:221–249

Hannan E.J., Quinn B.G. (1979). The determination of the order of an autoregression. J.R.S.S., B 41:190–195

Hoeffding W. (1963). Probability inequalities for sums of bounded random variables. J.A.S.A. 58:13–30

Hurvich C.M., Tsai C.-L. (1989). Regression and time series model selection in small samples. Biometrika 76:297–307

Johnstone I. (2001). Chi-square oracle inequalities. In: de Gunst M.C.M., Klaassen C.A.J., van der Vaart A.W. (eds) State of the Art in Probability and Statistics, Festschrift for Willem R. van Zwet. Institute of Mathematical Statistics, Lecture Notes–Monograph Series, Vol. 36, pp. 399–418

Kneip A. (1994). Ordered linear smoothers. Ann. Statist. 22:835–866

Lavielle M., Moulines E. (2000). Least-squares estimation of an unknown number of shifts in a time series. J. Time Series Anal. 21:33–59

Lebarbier E. (2005). Detecting multiple change-points in the mean of a Gaussian process by model selection. Signal Process. 85:717–736

Li K.C. (1987). Asymptotic optimality for C_p, C_L, cross-validation, and generalized cross-validation: Discrete index set. Ann. Statist. 15:958–975

Loubes J.-M., Massart P. (2004). Discussion of “Least angle regression” by Efron B., Hastie T., Johnstone I., Tibshirani R. Ann. Statist. 32:460–465

Mallows C.L. (1973). Some comments on C_p. Technometrics 15:661–675

Massart P. (1990). The tight constant in the D.K.W. inequality. Ann. Probab. 18:1269–1283

McQuarrie A.D.R., Tsai C.-L. (1998). Regression and Time Series Model Selection. World Scientific, Singapore

Mitchell T.J., Beauchamp J.J. (1988). Bayesian variable selection in linear regression. J.A.S.A. 83:1023–1032

Polyak B.T., Tsybakov A.B. (1990). Asymptotic optimality of the C_p-test for the orthogonal series estimation of regression. Theory Probab. Appl. 35:293–306

Rissanen J. (1978). Modeling by shortest data description. Automatica 14:465–471

Schwarz G. (1978). Estimating the dimension of a model. Ann. Statist. 6:461–464

Shen X., Ye J. (2002). Adaptive model selection. J.A.S.A. 97:210–221

Shibata R. (1981). An optimal selection of regression variables. Biometrika 68:45–54

Wallace D.L. (1959). Bounds on normal approximations to Student’s and the chi-square distributions. Ann. Math. Stat. 30:1121–1130

Whittaker E.T., Watson G.N. (1927). A Course of Modern Analysis. Cambridge University Press, London

Yang Y. (2005). Can the strengths of AIC and BIC be shared? A conflict between model identification and regression estimation. Biometrika 92:937–950

Yao Y.C. (1988). Estimating the number of change points via Schwarz criterion. Stat. Probab. Lett. 6:181–189

Cite this article

Birgé, L., Massart, P. Minimal Penalties for Gaussian Model Selection. Probab. Theory Relat. Fields 138, 33–73 (2007). https://doi.org/10.1007/s00440-006-0011-8

DOI: https://doi.org/10.1007/s00440-006-0011-8