Abstract

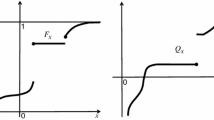

A theorem by Wilks asserts that, in smooth parametric density estimation, twice the difference between the maximum log-likelihood and the log-likelihood of the sampling distribution converges in distribution to a chi-square distribution whose number of degrees of freedom coincides with the model dimension. This observation is at the core of some goodness-of-fit testing procedures and of some classical model selection methods. This paper describes a non-asymptotic version of the Wilks phenomenon in bounded contrast optimization procedures. Using concentration inequalities for general functions of independent random variables, it proves that in bounded contrast minimization (as, for example, in Statistical Learning Theory), the excess empirical risk, that is, the difference between the empirical risk of the minimizer of the true risk in the model and the minimum of the empirical risk, satisfies a Bernstein-like inequality in which the variance term reflects the dimension of the model and the scale term reflects the noise conditions. From a mathematical statistics viewpoint, the significance of this result comes from the recent observation that, in model selection via penalization, the excess empirical risk represents a minimum penalty if non-asymptotic guarantees on the prediction error are to be provided. From the perspective of empirical process theory, this paper describes a concentration inequality for the supremum of a bounded non-centered (actually non-positive) empirical process. Combining the now classical analysis of M-estimation (building on Talagrand’s inequality for suprema of empirical processes) with versatile moment inequalities for functions of independent random variables, this paper develops a genuine Bernstein-like inequality that seems beyond the reach of traditional tools.
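To make the classical statement concrete, here is a minimal simulation sketch. It is not taken from the paper: the Gaussian location model, the function names `wilks_statistic` and `simulate`, and the values of `n`, `d`, and `reps` are all assumptions chosen for illustration. In the model N(θ, I_d) with true parameter θ = 0, the maximum likelihood estimator is the sample mean, and twice the log-likelihood gap equals n‖x̄‖², which follows a chi-square distribution with d degrees of freedom (mean d, variance 2d). The squared-error contrast used here is unbounded, so the sketch illustrates only the asymptotic phenomenon that the paper revisits, not its non-asymptotic result for bounded contrasts.

```python
import random
import statistics


def wilks_statistic(n, d, rng):
    """Twice the log-likelihood gap in the model N(theta, I_d), true theta = 0.

    The MLE is the sample mean, so 2 * (loglik(MLE) - loglik(0)) reduces to
    n * ||sample mean||^2, computed here coordinate by coordinate.
    """
    total = 0.0
    for _ in range(d):
        coord_mean = sum(rng.gauss(0.0, 1.0) for _ in range(n)) / n
        total += n * coord_mean ** 2
    return total


def simulate(n=50, d=5, reps=2000, seed=0):
    """Draw `reps` independent copies of the statistic (illustrative defaults)."""
    rng = random.Random(seed)
    return [wilks_statistic(n, d, rng) for _ in range(reps)]


stats = simulate()
# A chi-square variable with d degrees of freedom has mean d and variance 2d,
# so the empirical mean and variance should be close to 5 and 10 here.
print(statistics.mean(stats), statistics.variance(stats))
```

In this toy model the excess empirical risk for the contrast ‖x − θ‖²/2 is ‖x̄‖²/2, that is, the simulated statistic divided by 2n, which is why its typical size scales with the model dimension d.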

Additional information

This work was supported by ANR Grant TAMIS and Network of Excellence PASCAL II.

Cite this article

Boucheron, S., Massart, P. A high-dimensional Wilks phenomenon. Probab. Theory Relat. Fields 150, 405–433 (2011). https://doi.org/10.1007/s00440-010-0278-7

DOI: https://doi.org/10.1007/s00440-010-0278-7

Keywords

- Wilks phenomenon

- Risk estimates

- Suprema of empirical processes

- Concentration inequalities

- Statistical learning