Abstract

In this study, emotion recognition from speech signals was performed using the fuzzy C-means algorithm. Two kinds of spectral features were obtained from the speech signals: Mel frequency cepstral coefficients and linear prediction coefficients. Statistical features were then extracted from these spectral features. After feature selection, cluster centers were identified using the type-1 fuzzy C-means (FCM) algorithm and used as input to the classifier. Four supervised classifiers were used for classification: artificial neural network (ANN), naive Bayes (NB), k-nearest neighbors (kNN), and support vector machine (SVM). All seven emotions of the EmoDB database were included. Among the features obtained, FCM clustering was applied to the Mel coefficients, and the resulting cluster centers were used as input for classification. The results showed that using FCM as a preprocessing step increased the success rate. A comparison of the classification methods showed that the maximum success rate, 92.86%, was obtained with the SVM classifier.
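The FCM preprocessing step described above can be illustrated with a minimal sketch. This is not the authors' implementation: it is the standard alternating update of memberships and centers, written in plain Python. The function name `fuzzy_c_means`, the fuzzifier `m = 2`, the choice of two clusters, the 13-dimensional synthetic "frames" standing in for Mel coefficients, and the ordering of centers by their first coefficient are all assumptions made for illustration.

```python
import math
import random


def fuzzy_c_means(points, n_clusters, m=2.0, max_iter=100, tol=1e-5, seed=0):
    """Type-1 fuzzy C-means with the standard alternating updates.

    points: list of equal-length feature vectors (lists of floats).
    Returns (centers, memberships); memberships[i][j] is the degree to
    which point i belongs to cluster j, and each row sums to 1.
    """
    rng = random.Random(seed)
    dim = len(points[0])

    # Random initial memberships, normalised per point.
    U = []
    for _ in points:
        row = [rng.random() + 1e-9 for _ in range(n_clusters)]
        total = sum(row)
        U.append([u / total for u in row])

    def update_centers(U):
        # Each center is the mean of all points weighted by u_ij ** m.
        centers = []
        for j in range(n_clusters):
            weights = [row[j] ** m for row in U]
            wsum = sum(weights)
            centers.append([
                sum(w * p[d] for w, p in zip(weights, points)) / wsum
                for d in range(dim)
            ])
        return centers

    for _ in range(max_iter):
        centers = update_centers(U)
        change = 0.0
        new_U = []
        for i, p in enumerate(points):
            # Euclidean distance to each center (floored to avoid 1/0).
            dists = [max(math.dist(p, c), 1e-12) for c in centers]
            # u_ij = d_ij^(-2/(m-1)) / sum_k d_ik^(-2/(m-1))
            inv = [d ** (-2.0 / (m - 1.0)) for d in dists]
            total = sum(inv)
            row = [v / total for v in inv]
            change = max(change, max(abs(a - b) for a, b in zip(row, U[i])))
            new_U.append(row)
        U = new_U
        if change < tol:  # memberships have stopped moving
            break
    return update_centers(U), U


# Synthetic stand-in for Mel-coefficient frames (assumption, not EmoDB data):
# two well-separated groups of 13-dimensional vectors.
rng = random.Random(42)
frames = [[rng.gauss(0.0, 0.3) for _ in range(13)] for _ in range(50)]
frames += [[rng.gauss(3.0, 0.3) for _ in range(13)] for _ in range(50)]

centers, U = fuzzy_c_means(frames, n_clusters=2)

# Cluster order is arbitrary, so fix it before flattening (assumed convention).
ordered = sorted(centers, key=lambda c: c[0])
feature_vector = [x for c in ordered for x in c]
```

Here `feature_vector` plays the role of the cluster centers passed to a classifier. How the study ordered, selected, or combined the centers before classification is not stated in the abstract, so that part of the sketch is only one plausible choice.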

References

France DJ, Shiavi RG (2000) Acoustical properties of speech as indicators of depression and suicidal risk. IEEE Trans Biomed Eng 47:829–837. doi:10.1109/10.846676

Ma J, Jin H, Yang LT, Tsai JJ-P (2006) Ubiquitous intelligence and computing: third international conference, UIC 2006, Wuhan, China, September 3–6 proceedings (LNCS). Springer, Secaucus

Nasukawa T, Nasukawa T, Yi J, Yi J (2003) Sentiment analysis: capturing favorability using natural language processing. In: Proceedings of the 2nd international conference on knowledge capture, pp 70–77. doi:10.1145/945645.945658

Sönmez E, Aalbayrak S (2016) A facial component-based system for emotion classification. Turkish J Electr Eng Comput Sci 24:1663–1673

Peters G, Weber R (2016) DCC—a framework for dynamic granular clustering. Granul Comput. doi:10.1007/s41066-015-0012-z

Yao Y (2016) A triarchic theory of granular computing. Granul Comput 1:145–157. doi:10.1007/s41066-015-0011-0

Zhao X, Zhang S (2015) Spoken emotion recognition via locality-constrained kernel sparse representation. Neural Comput Appl 26(3):735–744

Sun Y, Wen G, Wang J (2015) Weighted spectral features based on local Hu moments for speech emotion recognition. Biomed Signal Process Control 18:80–90. doi:10.1016/j.bspc.2014.10.008

Karimi S, Sedaaghi MH (2016) How to categorize emotional speech signals with respect to the speaker’s degree of emotional intensity. Turkish J Electr Eng Comput Sci 24:1306–1324. doi:10.3906/elk-1312-196

Cheng B (2011) Emotion recognition from physiological signals using AdaBoost. Commun Comput Inf Sci 224 CCIS:412–417. doi:10.1007/978-3-642-23214-5_54

Min F, Xu J (2016) Semi-greedy heuristics for feature selection with test cost constraints. Granul Comput 1:199–211. doi:10.1007/s41066-016-0017-2

Eyben F, Wöllmer M, Schuller B (2010) Opensmile: the munich versatile and fast open-source audio feature extractor. Proc ACM Multimed. doi:10.1145/1873951.1874246

Milton A, Selvi ST (2014) Class-specific multiple classifiers scheme to recognize emotions from speech signals. Comput Speech Lang 28:727–742. doi:10.1016/j.csl.2013.08.004

Nwe TL, Foo SW, De Silva LC (2003) Speech emotion recognition using hidden Markov models. Speech Commun 41:603–623. doi:10.1016/S0167-6393(03)00099-2

Hanilçi C (2007) A comparative study of speaker recognition techniques, MSc, Uludag University, Bursa

Albornoz EM, Milone DH, Rufiner HL (2011) Spoken emotion recognition using hierarchical classifiers. Comput Speech Lang 25:556–570. doi:10.1016/j.csl.2010.10.001

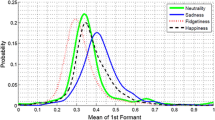

Bozkurt E, Erzin E, Erdem ÇE, Erdem AT (2011) Formant position based weighted spectral features for emotion recognition. Speech Commun 53:1186–1197. doi:10.1016/j.specom.2011.04.003

Song M, Wang Y (2016) A study of granular computing in the agenda of growth of artificial neural networks. Granul Comput. doi:10.1007/s41066-016-0020-7

Lingras P, Haider F, Triff M (2016) Granular meta-clustering based on hierarchical, network, and temporal connections. Granul Comput 1:71–92. doi:10.1007/s41066-015-0007-9

El Ayadi M, Kamel MS, Karray F (2011) Survey on speech emotion recognition: features, classification schemes, and databases. Pattern Recognit 44:572–587. doi:10.1016/j.patcog.2010.09.020

Kotropoulos C (2003) A state of the art review on emotional speech databases. In: 1st Richmedia conference, pp 109–119

Burkhardt F, Paeschke A, Rolfes M et al (2005) A database of German emotional speech. In: 9th European conference on speech communication and technology, pp 3–6

Becchetti C, Ricotti LP (2004) Speech recognition: theory an C++ implementation, 3rd edn. Wiley, New York, pp 125–135

Dunn JC (1973) A fuzzy relative of the ISODATA process and its use in detecting compact well-separated clusters. J Cybern 3:32–57

Bezdek JC (1981) Pattern recognition with fuzzy objective function algorithms. Plenum Press, New York, p 4

Bezdek JC (1983) Pattern recognition with fuzzy objective function algorithms. SIAM Rev 25:442. doi:10.1137/1025116

Bezdek JC, Ehrlich R, Full W (1984) FCM: the fuzzy C-means clustering algorithm. Comput Geosci 10(2–3):191–203

http://home.deib.polimi.it/matteucc/Clustering/tutorial_html/cmeans.html. Access: 30 Sept 2016

Anderson D, Mcneill G (1992) Artificial neural networks technology. Kaman Sciences Corporation, Utica, New York

Baluja S (1995) Artificial neural network evolution: learning to steer a land vehicle. CRC Press Inc

Mitchell TM (1997) Machine learning. McGraw-Hill, Inc., New York

Ververidis D, Kotropoulos C (2006) Emotional speech recognition: resources, features, and methods. Speech Commun 48:1162–1181. doi:10.1016/j.specom.2006.04.003

Ceylan R, Özbay Y (2007) Comparison of FCM, PCA and WT techniques for classification ECG arrhythmias using artificial neural network. Expert Syst Appl 33:286–295. doi:10.1016/j.eswa.2006.05.014

Chaoui H, Sicard P, Gueaieb W (2009) ANN-based adaptive control of robotic manipulators with friction and joint elasticity. IEEE Trans Ind Electron 56:3174–3187. doi:10.1109/TIE.2009.2024657

Özbay Y, Tezel G (2010) A new method for classification of ECG arrhythmias using neural network with adaptive activation function. Digit Signal Process 20:1040–1049. doi:10.1016/j.dsp.2009.10.016

Oflazoglu C, Yildirim S (2013) Recognizing emotion from Turkish speech using acoustic features. EURASIP J Audio Speech Music Process 2013:26. doi:10.1186/1687-4722-2013-26

Davy M, Gretton A, Doucet A et al (2002) Optimized support vector machines for nonstationary signal classification. Sig Process 9:442–445. doi:10.1109/LSP.2002.806070

Rish I (2001) An empirical study of the naive Bayes classifier. In: Proceedings of IJCAI-01 workshop on Empirical Methods in AI, pp 41–46

Bouckaert RR, Frank E, Hall MA, Holmes G, Pfahringer B, Reutemann P, Witten IH (2010) WEKA-experiences with a java open-source project. J Mach Learn Res 11:2533–2541

Hall M, Frank E, Holmes G, Pfahringer B, Reutemann P, Witten IH (2009) The WEKA data mining software. ACM SIGKDD Explor Newsl 11:10–18

Antonelli M, Ducange P, Lazzerini B, Marcelloni F (2016) Multi-objective evolutionary design of granular rule-based classifiers. Granul Comput 1:37–58. doi:10.1007/s41066-015-0004-z

Wu S, Falk TH, Chan W (2011) Automatic speech emotion recognition using modulation spectral features. Speech Commun 53:768–785. doi:10.1016/j.specom.2010.08.013

Engberg IS, Hansen AV (1996) Documentation of the danish emotional speech database des. Intern AAU report, Cent Pers Kommun, p 22

Acknowledgements

The authors acknowledge the support of this study provided by Selcuk University Scientific Research Projects. The authors also thank TUBITAK for their support of this study.

Cite this article

Demircan, S., Kahramanli, H. Application of fuzzy C-means clustering algorithm to spectral features for emotion classification from speech. Neural Comput & Applic 29, 59–66 (2018). https://doi.org/10.1007/s00521-016-2712-y

DOI: https://doi.org/10.1007/s00521-016-2712-y