Abstract

In this paper, we show that all nodes can be found optimally for almost all random Erdős–Rényi \(\mathcal G(n,p)\) graphs using the continuous-time quantum spatial search procedure. This holds for both adjacency and Laplacian matrices, though under different conditions. The former requires \(p=\omega (\log ^8(n)/n)\), while the latter requires \(p\ge (1+\varepsilon )\log (n)/n\), where \(\varepsilon >0\). The proof analyzes the convergence of eigenvectors corresponding to outlying eigenvalues in the \(\Vert \cdot \Vert _\infty \) norm. At the same time, for \(p<(1-\varepsilon )\log (n)/n\), the property does not hold for either matrix because of connectivity issues. Hence, our result concerning the Laplacian matrix is tight.

1 Introduction

Quantum walk is a topic of great interest in quantum information theory [1,2,3]. Numerous possible applications were already discovered, including quantum spatial search [1, 4], Google algorithm [5,6,7] or quantum transport [8, 9]. Throughout this article, we consider quantum spatial search procedure, which is an example of an algorithm yielding a result up to quadratically faster than its classical counterpart. Since the very first paper describing it was published [1], plenty of new results have appeared in the literature. This includes the noise resistance [10], efficiency analysis [1, 4, 11,12,13], imperfect implementation [14] and difference in implementation [15].

Unfortunately, most of the results concern very specific graph classes like complete graphs [1, 10], simplices of complete graphs [14], and binary trees [13]. Due to some kind of ‘symmetry,’ it was not necessary to analyze all vertices separately (as, for example, in complete graphs or hypercubes), or at least the analysis could be easily reduced (for example, to the level in binary trees). The first big step toward a generalization to a large collection of graphs is the work of Chakraborty et al. [4], where the Erdős–Rényi random graph model \(\mathcal G(n,p)\) was analyzed (with n, p standing for the number of vertices and the probability of an edge being present, respectively). The authors proved that for almost all graphs almost all vertices can be found optimally. Since there are already known examples of graphs for which some vertices are searched in \(\Theta (n^{\frac{1}{2}+a})\) time for \(a>0\) [1, 13] (throughout this paper \(O,o,\Omega ,\omega ,\Theta ,\sim \) denote asymptotic relations, see [16]), the result cannot be strengthened to ‘all graphs.’

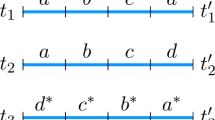

The proof of the main result of Chakraborty et al. [4] is based on a lemma describing limit behavior of a principal eigenvector \(|\lambda _1\rangle \) of the adjacency matrix. The authors show that for \(p>\log ^{\frac{3}{2}}(n)/n\), if \(|s\rangle =\frac{1}{\sqrt{n}}\sum _{v\in V}|v\rangle = \alpha |\lambda _1\rangle +\beta |\lambda _1\rangle ^\bot \), then almost surely \(\alpha = 1-o(1)\). Since the time needed for quantum spatial search is \(\Theta (\frac{1}{|\langle {w}|{\lambda _1}\rangle |})\), where \( w\) denotes the marked vertex, we have that almost all vertices can be found in optimal time. However, in this case it is not trivial which vertex is chosen, since the Erdős–Rényi graph is not necessarily symmetric. This kind of convergence allows the existence of vertices, which can be found in linear time. As an example, consider a vector

for \(k=o(n)\). We have \(\langle {s}|{\lambda _1'}\rangle = \alpha = 1 - o(1)\), and thus, a priori, the result of [4] does not prevent the vector \(|\lambda _1'\rangle \) from being the leading eigenvector of an adjacency matrix. In such a case, the argument used in [4] is not tight enough to exclude the possibility that all vertices \(w \in \{1,\dots ,k\}\) will be found in \(\Omega (n)\) time, which is the complexity of random guessing. Note that it is even possible that such vertices exist for almost all graphs. Furthermore, many of the applications mentioned in [4] require \(\langle {i}|{v_1}\rangle \approx \langle {j}|{v_1}\rangle \) for arbitrary i, j. Otherwise, creating Bell states or quantum transport will be at least very difficult.
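The gap between overlap-based and entrywise closeness can be checked with a few lines of arithmetic. The sketch below uses a hypothetical unit vector, chosen here for illustration and not necessarily the vector of Eq. (1): its first k entries equal 1/n and the remaining n-k entries are equal. The function name and the values of n and k are assumptions as well.

```python
import math


def overlap_and_sup_deviation(n: int, k: int):
    """Compare a hypothetical unit vector with the uniform state |s>.

    Assumed vector (illustration only): first k entries equal 1/n,
    the remaining n - k entries are equal and fix the unit norm.
    Returns (<s|psi>, sqrt(n) * ||psi - s||_inf).
    """
    small = 1.0 / n
    # Remaining entries are chosen so that the Euclidean norm equals 1.
    large = math.sqrt((1.0 - k * small**2) / (n - k))
    s_entry = 1.0 / math.sqrt(n)
    overlap = s_entry * (k * small + (n - k) * large)
    sup_dev = max(abs(small - s_entry), abs(large - s_entry))
    return overlap, math.sqrt(n) * sup_dev


if __name__ == "__main__":
    ov, dev = overlap_and_sup_deviation(10**6, 10**3)
    print(f"overlap with |s>: {ov:.6f}")
    print(f"sqrt(n) * sup-norm deviation: {dev:.6f}")
```

With k much smaller than n the overlap stays close to 1, while the rescaled sup-norm deviation stays close to 1 instead of vanishing, so closeness in overlap alone says nothing about the k small entries.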

What is more, due to the laws of quantum mechanics, the measurement time needs to be known in advance. This concerns not only the asymptotic complexity, but the constant factor as well. For example, if for two different nodes \(v,v'\) we have \(\langle {v}|{\lambda _1}\rangle =\frac{1}{\sqrt{n}}\) and \(\langle {v'}|{\lambda _1}\rangle =\frac{2}{\sqrt{n}}\), then different measurement times should be chosen for each.

Both effects mentioned above can be described as hiding nodes in the graph. This leads to the following research problem: can we actually ‘hide’ a vertex in a random Erdős–Rényi graph? We show that in the case of the adjacency matrix, \(p=\omega (\log ^3(n)/(n\log ^2(\log (n))))\) is a sufficient requirement for all-vertices optimal search. Under the further constraint \(p=\omega (\log ^8(n)/n)\), we obtain a common measurement time. Moreover, we go a step further than the authors of [4] and also study the Laplacian matrix, which leads to tighter results. In the case of the Laplacian matrix, \(p>(1+\varepsilon )\log (n)/n\), for constant \(\varepsilon >0\), is sufficient for a common measurement time; however, in the \(p=\Theta (\log (n)/n)\) case, it may not be true that a success probability of \(1-o(1)\) is achieved almost surely. If \(p<(1-\varepsilon )\log (n)/n\), then a random graph almost surely contains isolated nodes [17]; hence, it is possible to hide a vertex for both adjacency and Laplacian matrices.

2 Element-wise optimality for adjacency matrix

Let \(G=(V,E)\) be a simple undirected graph with node set \(V=\{1,\dots ,n\}\) and edge set \(E\subset V\times V\). Moreover, let \(\mathcal H_G\) be a quantum system spanned by an orthonormal basis \(\{|v\rangle : v \in V\}\). Quantum spatial search is based on the Schrödinger differential equation

where \(M_\mathrm{G}\) is a matrix corresponding to the graph structure, typically the rescaled adjacency matrix A or the Laplacian \(L=D-A\), where D is the degree matrix. In [4], the authors proved that for a random Erdős–Rényi graph, in the case of the adjacency matrix, almost all vertices from almost all graphs can be found optimally. We say that a property holds almost surely (for almost all graphs) when the probability that a randomly chosen graph has this property is \(1-o(1)\). The result was based on the following simplified lemma.

Lemma 1

[4] Let H be a Hamiltonian with eigenvalues \(\lambda _1\ge \dots \ge \lambda _n\) satisfying \(\lambda _1=1\) and \(|\lambda _i|\le c <1\) for all \(i>1\) with corresponding eigenvectors \(|\lambda _1\rangle =|s\rangle ,|\lambda _2\rangle ,\dots ,|\lambda _n\rangle \) and let w denote a marked vertex. For an appropriate choice of \(r\in [-\frac{c}{1+c},\frac{c}{1-c}]\), the starting state \(|s\rangle \) evolves by the Schrödinger equation with the Hamiltonian \((1+r)H +|w\rangle \langle w|\) for time \(t = \Theta (\sqrt{n})\) into the state \(|f\rangle \) satisfying \(|\langle {w}|{f}\rangle |^2\ge \frac{1-c}{1+c}+o(1)\).

According to the proof of the lemma, the bound can be derived by choosing r satisfying

The assumptions from the lemma guarantee the existence of \(r\in [\frac{-c}{1+c},\frac{c}{1-c}]\) satisfying the above equality. Note that the result is constructive for \(c=o(1)\), as in this case \(r=o(1)\) as well as \(t=\frac{\pi \sqrt{n}}{2}\). Otherwise, a proper determination of r and t is needed.

According to the lemma, two properties of \(M_\mathrm{G}\) are useful in proving search optimality. Firstly, the matrix should have a single outlying eigenvalue. Secondly, if \(|\lambda _1\rangle \) is the eigenvector corresponding to the outlying eigenvalue, one should have \( \vert \langle {w}|{\lambda _1}\rangle \vert =\Theta (\frac{1}{\sqrt{n}})\).

Note that in the limit \(n\rightarrow \infty \), norms cease to be equivalent; thus, different concepts of closeness of vectors can be chosen. In [4], the authors choose \(1-|\langle {\psi }|{\phi }\rangle |\) for arbitrary vectors \(|\psi \rangle , |\phi \rangle \), which still allows \(o(n)\) nodes to require time \(\omega (\sqrt{n})\), see the example given in Eq. (1). In order to make statements concerning all vertices, we should study the limit behavior of the principal vector in the \(L^{\infty }\) norm \(\Vert \cdot \Vert _\infty \), which bounds the maximal deviation of coordinates. More precisely, we are interested in whether \(\Vert | \lambda _{1}\rangle -|s\rangle \Vert _\infty = \frac{o(1)}{\sqrt{n}}\), as this would imply that for an arbitrary marked node \( w\) we have \( \langle {w}|{\lambda _{1}}\rangle =(1+o(1))\frac{1}{\sqrt{n}}\). This gives the bound \(\Theta (\frac{1}{|\langle {w}|{\lambda _1}\rangle |}) = \Theta (\sqrt{n})\) for the time needed by the quantum spatial search algorithm to locate vertex w.

Indeed, convergence in the infinity norm was shown by Mitra [18], provided that \(p\ge \log ^6(n)/n\). We have managed to weaken the assumptions and thereby strengthen the result.

Proposition 1

Suppose A is an adjacency matrix of a random Erdős–Rényi graph \(\mathcal G(n,p)\) with \(p=\omega ( \log ^3(n)/(n\log ^2\log n))\). Let \(|\lambda _{1}\rangle \) denote the eigenvector corresponding to the largest eigenvalue of A and let \(|s\rangle =\frac{1}{\sqrt{n}}\sum _{v}|v\rangle \). Then,

with probability \(1-o(1)\).

The proof, which follows the concept proposed by Mitra [18], can be found in Section A in Supplementary Materials. This implies that all vertices can be found optimally in \(\Theta (\sqrt{n})\) time for almost all graphs.
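The entrywise convergence can be observed numerically on a single sampled graph. The following sketch is an illustration under stated assumptions, not the procedure used in the proof: it approximates the principal eigenvector by plain power iteration (a substitute technique chosen to keep the example dependency-free) and reports \(\sqrt{n}\,\Vert |\lambda _1\rangle -|s\rangle \Vert _\infty \). The function name, seed, graph size and iteration count are hypothetical choices.

```python
import math
import random


def sup_norm_gap(n: int, p: float, seed: int = 0, iterations: int = 40) -> float:
    """Return sqrt(n) * || |lambda_1> - |s> ||_inf for one sampled G(n, p) graph.

    Assumes the sampled graph is dense enough that power iteration converges
    quickly (a clear gap between the two largest eigenvalue moduli).
    """
    rng = random.Random(seed)
    # Sample the graph as adjacency lists.
    nbrs = [[] for _ in range(n)]
    for u in range(n):
        for v in range(u + 1, n):
            if rng.random() < p:
                nbrs[u].append(v)
                nbrs[v].append(u)
    # Power iteration for the principal eigenvector of A, started from |s>.
    s_entry = 1.0 / math.sqrt(n)
    x = [s_entry] * n
    for _ in range(iterations):
        y = [sum(x[u] for u in nbrs[v]) for v in range(n)]
        norm = math.sqrt(sum(t * t for t in y))
        x = [t / norm for t in y]
    return math.sqrt(n) * max(abs(t - s_entry) for t in x)


if __name__ == "__main__":
    print(sup_norm_gap(300, 0.5))
```

The returned value is the relative entrywise deviation; Proposition 1 states that it tends to 0 with probability \(1-o(1)\), so for moderate n it is expected to be small but not negligible.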

To show the common time measurement, suppose that the largest eigenvalue of \(\frac{1}{np}A\) satisfies

where \(\delta \rightarrow 0\). Then, the probability of measuring the searched vertex w in time t can be approximated by [4]

Since \(|\lambda _1\rangle \) tends to \(|s\rangle \) in the \(\Vert \cdot \Vert _\infty \) norm, the approximation works for all nodes. Hence, when \(\delta =O(\frac{1}{\sqrt{n}})\) (with a small constant in the \(\Theta (\frac{1}{\sqrt{n}})\) case), all of the vertices can be found in time \(O(\sqrt{n})\). Nevertheless, \(\delta \) depends on the chosen graph, and thus, the measurement time may differ. In order to ensure that the time and probability of measurement are the same for all marked nodes and almost all chosen graphs, one should ensure that \(\delta = o(\frac{1}{\sqrt{n}})\) almost surely.

If \(p=\omega (\log ^8(n)/n)\), then the largest eigenvalue \(\lambda _1\) asymptotically follows the \(\mathcal N\left( 1,\frac{1}{n}\sqrt{2(1-p)/p} \right) \) distribution [19], where \(\mathcal N(\mu ,\sigma )\) is the normal distribution with mean \(\mu \) and standard deviation \(\sigma \); see Section B in Supplementary Materials for a step-by-step derivation. Therefore, one can show that asymptotically almost surely

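where \(\delta \) is any function satisfying \(\delta =\omega (\frac{1}{n\sqrt{p}})\). Note that since \(np=\omega (\log ^8(n))\), we have \(\frac{1}{n\sqrt{p}}=o(\frac{1}{\sqrt{n}\log ^4(n)})\), so \(\delta \) can be chosen to satisfy \(\delta =o(\frac{1}{\sqrt{n}\log ^4(n)})\) even in the worst-case scenario. This, in turn, allows us to use the simplified version of Eq. (6)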

for large n. Thus, at time \(t=\frac{\pi }{2}\sqrt{n}\), the probability of measurement is optimal, independently of the chosen marked node. Finally, we summarize our results concerning the adjacency matrix in the following theorem.

Theorem 2

Suppose we choose a graph according to the Erdős–Rényi \(\mathcal G(n,p)\) model with \(p=\omega (\log ^8(n)/n)\). Then by choosing \(M_\mathrm{G}=\frac{1}{np}A\) in Eq. (2), where A is the adjacency matrix, almost surely all vertices can be found with probability \(1-o(1)\) with common measurement time approximately \(t=\pi \sqrt{n}/2\).
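A small numerical experiment can illustrate the statement of Theorem 2. The sketch below is not the authors' code. As an assumption, it takes \(H=\frac{1}{np}A+|w\rangle \langle w|\) as the search Hamiltonian (the form appearing in Lemma 1 with \(r=0\)), integrates the Schrödinger equation with a classical fourth-order Runge–Kutta scheme, and returns the probability of measuring w at \(t=\pi \sqrt{n}/2\). The function name, seed, step size and graph size are illustrative.

```python
import math
import random


def search_success_probability(n: int, p: float, marked: int = 0,
                               seed: int = 1, dt: float = 0.05) -> float:
    """Simulate continuous-time search on one sampled G(n, p) graph.

    Assumed Hamiltonian (illustration only): H = A/(np) + |w><w|.
    The state starts in the uniform superposition |s> and is measured
    at time t = pi * sqrt(n) / 2.
    """
    rng = random.Random(seed)
    nbrs = [[] for _ in range(n)]
    for u in range(n):
        for v in range(u + 1, n):
            if rng.random() < p:
                nbrs[u].append(v)
                nbrs[v].append(u)
    scale = 1.0 / (n * p)

    def rhs(psi):
        # Right-hand side of d|psi>/dt = -i H |psi>.
        out = [scale * sum(psi[u] for u in nbrs[v]) for v in range(n)]
        out[marked] += psi[marked]
        return [-1j * z for z in out]

    total_time = math.pi * math.sqrt(n) / 2.0
    steps = math.ceil(total_time / dt)
    h = total_time / steps
    psi = [complex(1.0 / math.sqrt(n))] * n
    for _ in range(steps):
        # One classical RK4 step of size h.
        k1 = rhs(psi)
        k2 = rhs([a + 0.5 * h * b for a, b in zip(psi, k1)])
        k3 = rhs([a + 0.5 * h * b for a, b in zip(psi, k2)])
        k4 = rhs([a + h * b for a, b in zip(psi, k3)])
        psi = [a + h / 6.0 * (b + 2 * c + 2 * d + e)
               for a, b, c, d, e in zip(psi, k1, k2, k3, k4)]
    return abs(psi[marked]) ** 2


if __name__ == "__main__":
    print(search_success_probability(100, 0.5))
```

Because H is real and symmetric and the initial state is real, flipping the overall sign of H leaves the measurement probability unchanged, so the sign convention of the Hamiltonian does not affect this experiment.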

3 Element-wise optimality for Laplacian matrix

A similar property holds for the Laplacian matrix L. This is a positive semi-definite matrix whose null-space dimension equals the number of connected components. Based on the results from [20], one can show that for \(p=\omega (\log (n) /n)\) all of the remaining eigenvalues of \(\frac{L}{np}\) converge to 1, see Section C in Supplementary Materials. At the same time, the eigenvector corresponding to the null space is exactly the equal superposition \(|s\rangle = |\mu _n\rangle = \frac{1}{\sqrt{n}}\sum _{v\in V} |v\rangle \). Thus, since for \(p>(1+\varepsilon )\log (n)/n\) a graph is almost surely connected, the Laplacian matrix takes the form

where \(\mu _i\rightarrow 1\) almost surely for \(1\le i\le n-1\). Here \(\mu _1,\dots ,\mu _{n}\) denote eigenvalues of Laplacian matrix with corresponding eigenvectors \(|\mu _1\rangle ,\dots ,|\mu _n\rangle \).

Note that since the identity matrix corresponds to global phase change only, which is an unmeasurable parameter, we can equivalently choose

Note that the matrix above satisfies the requirements of Lemma 1 from [4], and therefore, all of the vertices can be found optimally with probability \(1-o(1)\). Common time measurement is a direct application of Lemma 1 from [4], since more in-depth proof analysis shows that, under the theorem assumptions, \(t=\pi \sqrt{n} /2\) should be chosen for maximizing the success probability.

The situation changes in the case of \(p=O(\log (n)/n)\). Note that for both adjacency and Laplacian matrices the evolution does not change the probability of measuring isolated vertices. If \(p<(1-\varepsilon )\log (n)/n\), then graphs almost surely contain such vertices, and hence, one can actually hide a vertex in such a graph.
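The role of the threshold \(\log (n)/n\) can be made concrete with a first-moment count. The sketch below evaluates the expected number of isolated vertices, \(n(1-p)^{n-1}\), for \(p=p_0\log (n)/n\). It only illustrates the threshold; the almost-sure statement itself is the cited result [17]. The function name and the sample values of n and \(p_0\) are assumptions.

```python
import math


def expected_isolated_vertices(n: int, p0: float) -> float:
    """Expected number of isolated vertices in G(n, p) with p = p0 * ln(n) / n."""
    p = p0 * math.log(n) / n
    # A fixed vertex is isolated with probability (1 - p)^(n - 1);
    # log1p keeps the computation accurate for very small p.
    return n * math.exp((n - 1) * math.log1p(-p))


if __name__ == "__main__":
    for p0 in (0.5, 1.0, 1.5):
        print(p0, expected_isolated_vertices(10**6, p0))
```

For \(p_0<1\) the expectation grows like \(n^{1-p_0}\), while for \(p_0>1\) it decays like \(n^{1-p_0}\) towards 0, which matches the two regimes discussed above.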

The case \(p\sim p_0\log (n)/n\) for a constant \(p_0>1\) is a smooth transition between the hiding and non-hiding cases mentioned before. In this case, based on Exercise III.4 from [21], one can show that \(\mu _1 \sim (1-p_0)(W_{0}(\frac{1-p_0}{\mathrm {e}p_0}))^{-1}\log (n)\) and \(\mu _{n-1} \sim (1-p_0)(W_{-1}(\frac{1-p_0}{\mathrm {e}p_0}))^{-1}\log (n)\), where \(W_{0},W_{-1}\) are Lambert W functions, see Section D in Supplementary Materials. Here we use the notation \(f(n)\sim g(n) \iff f(n)-g(n)=o(g(n))\). In this case, with the choice \(M_\mathrm{G}=\mathrm {I}-\frac{1}{np}L\), the rescaled eigenvalues \(\frac{\mu _1}{np}\) and \(\frac{\mu _{n-1}}{np}\) no longer both converge to 1.

Nevertheless, we can still make simple changes in a matrix in order to obtain optimality of the procedure. Let \(a=(1-p_0)(W_{0}(\frac{1-p_0}{\mathrm {e}p_0}))^{-1} \) and \(b=(1-p_0)(W_{-1}(\frac{1-p_0}{\mathrm {e}p_0}))^{-1}\) denote constants corresponding to \(\mu _{1}\) and \(\mu _{n-1}\) limit behavior. Then

again satisfies Lemma 1 from [4] with \(c=\frac{a-b}{a+b}\). According to Lemma 1, the probability of success after time \(t=\frac{\pi \sqrt{n}}{2}\) is bounded from below by

The bound converges to 0 when \(p_0\rightarrow 1^+\) and to 1 when \(p_0\rightarrow \infty \), and it changes monotonically on \((1,\infty )\), see Fig. 1. Note that this agrees with the other results. For \(p_0<1\), the probability of finding all vertices is equal to 0 due to the connectivity issues mentioned before. For \(p_0\rightarrow \infty \), the situation becomes similar to \(p=\omega (\log (n)/n)\), where the non-hiding property was already shown. Note, however, that the actual success probability seems to be much higher than the bound, see Fig. 2. We summarize all of the results in the following theorems.

Fig. 1 The lower bound on the success probability of quantum spatial search for \(p=p_0\frac{\log (n)}{n}\) for almost all graphs from the Erdős–Rényi graph model. The exact formula is \(p_\mathrm {bound}=W_{0}\left( \frac{1-p_0}{\mathrm {e}p_0}\right) /W_{-1}\left( \frac{1-p_0}{\mathrm {e}p_0}\right) \). Note that \(p_{\mathrm {bound}}\rightarrow 0\) as \(p_0\rightarrow 1^+\), where the connectivity threshold is reached. Furthermore, \(p_{\mathrm {bound}}\rightarrow 1\) as \(p_0\rightarrow \infty \)
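The bound \(p_\mathrm {bound}=W_{0}(x)/W_{-1}(x)\) with \(x=\frac{1-p_0}{\mathrm {e}p_0}\) can be evaluated directly. The sketch below is a minimal, dependency-free illustration: it finds both real branches of the Lambert W function by bisection on \(w\mathrm {e}^{w}=x\), which is an implementation choice made here and not part of the original derivation. The function names are hypothetical.

```python
import math


def lambert_w_branches(x: float):
    """Return (W_0(x), W_{-1}(x)) for -1/e < x < 0 via bisection on w*exp(w) = x."""
    if not -1.0 / math.e < x < 0.0:
        raise ValueError("both real branches exist only for -1/e < x < 0")

    def f(w: float) -> float:
        return w * math.exp(w)

    # Principal branch W_0: f is increasing on [-1, 0].
    lo, hi = -1.0, 0.0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if f(mid) < x:
            lo = mid
        else:
            hi = mid
    w0 = 0.5 * (lo + hi)

    # Lower branch W_{-1}: f is decreasing on (-inf, -1]; bracket the root first.
    lo, hi = -2.0, -1.0
    while f(lo) < x:
        lo *= 2.0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if f(mid) < x:
            hi = mid  # mid lies to the right of the root
        else:
            lo = mid
    wm1 = 0.5 * (lo + hi)
    return w0, wm1


def p_bound(p0: float) -> float:
    """Lower bound W_0(x) / W_{-1}(x) with x = (1 - p0) / (e * p0), for p0 > 1."""
    x = (1.0 - p0) / (math.e * p0)
    w0, wm1 = lambert_w_branches(x)
    return w0 / wm1


if __name__ == "__main__":
    for p0 in (1.1, 2.0, 10.0, 100.0):
        print(p0, p_bound(p0))
```

Evaluating the function on an increasing sequence of \(p_0\) values reproduces the qualitative behavior described in the caption: the bound is small just above the connectivity threshold and grows towards 1.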

Theorem 3

Suppose we choose a graph according to the Erdős–Rényi \(\mathcal G(n,p)\) model. For \(p=\omega (\log (n)/n)\), by choosing \(M_\mathrm{G}=\frac{1}{np}L\) in Eq. (2), almost surely all vertices can be found with probability \(1-o(1)\) in time asymptotically equal to \(\pi \sqrt{n}/2\). For \(p\sim p_0\log (n)/n \), by choosing \(M_\mathrm{G} = (1+r)\gamma L\) for some proper r, where \(\gamma \) is defined as in Eq. (11), all vertices can be found in \(\Theta (\sqrt{n})\) time with probability bounded from below by the constant in Eq. (12).

We leave determining the proper values of r and t as an open question.

Fig. 2 Probability bounds for quantum spatial search together with the success probability obtained from simulation. The red dashed line denotes the limit bound for the success probability. The blue error bars denote \(\frac{1-c}{1+c}\) for \(c=\max \{|\lambda _2|,|\lambda _n|\}\) for the matrix from Eq. (11) for randomly chosen graphs. The black error bars denote the actual success probability. The bars span the minimal and maximal obtained values. Graphs were chosen according to the \(\mathcal G(n,2\frac{\log (n)}{n})\) model, the values of r were derived according to Eq. (3), and we chose time \(t=\frac{\pi \sqrt{n}}{2}\). Thirty graphs were chosen for each size. One can see that the bound for a randomly chosen graph oscillates around the limit value; nonetheless, the true success probability is much higher than the bound

Theorem 4

Suppose we choose a graph according to the Erdős–Rényi \(\mathcal G(n,p)\) model with \(p\le (1-\varepsilon )\log (n)/n\), where \(\varepsilon >0\). Then for both adjacency and Laplacian matrices, there almost surely exist vertices which cannot be found in o(n) time.

4 Conclusion and discussion

In this work, we prove that all vertices can be found optimally with common measurement time \((\pi \sqrt{n})/2\) for almost all Erdős–Rényi graphs for both adjacency and Laplacian matrices under conditions \(p=\omega (\log ^8(n)/n)\) and \(p\ge (1+\varepsilon )\log (n)/n\), respectively. The proof is based on element-wise ergodicity of the eigenvector corresponding to the outlying eigenvalue of adjacency or Laplacian matrix. While under the mentioned constraint adjacency matrix almost surely achieves success probability \(1-o(1)\), the same probability for Laplacian matrix in the \(p\sim p_0\log (n)/n\) case for some \(p_0>1\) can only be bounded from below by some positive constant. At the same time for \(p<(1-\varepsilon )\log (n)/n\), the property does not hold anymore, since almost surely there exist isolated vertices which need \(\Omega (n)\) time to be found.

While our derivation concerning the Laplacian matrix is nearly complete, since only an upper bound for the success probability is missing in the \(p=\Theta (\log (n)/n)\) case, in our opinion it is possible to weaken the condition on p for the adjacency matrix. The first key step would be showing that the largest eigenvalue \(\lambda (\frac{1}{np} A)\) follows the \(\mathcal N\left( 1,\frac{1}{n}\sqrt{2(1-p)/p}\right) \) distribution for \(p\ge (1+\varepsilon )\log (n)/n\). Then, since element-wise convergence of the principal vector requires \(p=\omega (\log ^3(n)/(n\log ^2\log n))\), the result would be strengthened to the last mentioned constraint. The second step would be the generalization of the mentioned element-wise convergence theorem.

A further interesting generalization of the result would be the analysis of more general random graph models. While this proposition has already been stated [4], our results show that in order to prove security of the quantum spatial search, it would be desirable to analyze the limit behavior of the principal vector in the sense of the \(\Vert \cdot \Vert _\infty \) norm.

References

Childs, A.M., Goldstone, J.: Spatial search by quantum walk. Phys. Rev. A 70(2), 022314 (2004)

Childs, A.M., Cleve, R., Deotto, E., Farhi, E., Gutmann, S., Spielman, D.A.: Exponential algorithmic speedup by a quantum walk. In: Proceedings of the Thirty-Fifth Annual ACM Symposium on Theory of Computing, pp. 59–68. ACM (2003)

Ambainis, A.: Quantum walks and their algorithmic applications. Int. J. Quantum Inf. 1(04), 507–518 (2003)

Chakraborty, S., Novo, L., Ambainis, A., Omar, Y.: Spatial search by quantum walk is optimal for almost all graphs. Phys. Rev. Lett. 116(10), 100501 (2016)

Paparo, G.D., Martin-Delgado, M.: Google in a quantum network. Sci. Rep. 2, 444 (2012)

Paparo, G.D., Müller, M., Comellas, F., Martin-Delgado, M.A.: Quantum google in a complex network. Sci. Rep. 3, 2773 (2013)

Sánchez-Burillo, E., Duch, J., Gómez-Gardenes, J., Zueco, D.: Quantum navigation and ranking in complex networks. Sci. Rep. 2, 605 (2012)

Mülken, O., Pernice, V., Blumen, A.: Quantum transport on small-world networks: a continuous-time quantum walk approach. Phys. Rev. E 76(5), 051125 (2007)

Mülken, O., Blumen, A.: Continuous-time quantum walks: models for coherent transport on complex networks. Phys. Rep. 502(2), 37–87 (2011)

Roland, J., Cerf, N.J.: Noise resistance of adiabatic quantum computation using random matrix theory. Phys. Rev. A 71(3), 032330 (2005)

Chakraborty, S., Novo, L., Di Giorgio, S., Omar, Y.: Optimal quantum spatial search on random temporal networks. Phys. Rev. Lett. 119, 220503 (2017)

Tulsi, A.: Success criteria for quantum search on graphs. arXiv preprint arXiv:1605.05013 (2016)

Philipp, P., Tarrataca, L., Boettcher, S.: Continuous-time quantum search on balanced trees. Phys. Rev. A 93(3), 032305 (2016)

Wong, T.G.: Spatial search by continuous-time quantum walk with multiple marked vertices. Quantum Inf. Process. 15(4), 1411–1443 (2016)

Wong, T.G., Tarrataca, L., Nahimov, N.: Laplacian versus adjacency matrix in quantum walk search. Quantum Inf. Process. 15(10), 4029–4048 (2016)

Knuth, D.E.: Big omicron and big omega and big theta. ACM Sigact News 8(2), 18–24 (1976)

Erdős, P., Rényi, A.: On the evolution of random graphs. Publ. Math. Inst. Hung. Acad. Sci. 5(1), 17–60 (1960)

Mitra, P.: Entrywise bounds for eigenvectors of random graphs. Electron. J. Comb. 16(1), R131 (2009)

Erdős, L., Knowles, A., Yau, H.T., Yin, J., et al.: Spectral statistics of Erdős-Rényi graphs I: local semicircle law. Ann. Probab. 41(3B), 2279–2375 (2013)

Chung, F., Radcliffe, M.: On the spectra of general random graphs. Electron. J. Comb. 18(1), P215 (2011)

Bollobás, B.: Random Graphs, 2nd edn. Cambridge Studies in Advanced Mathematics. Cambridge University Press, Cambridge (2001)

Bryc, W., Dembo, A., Jiang, T.: Spectral measure of large random Hankel, Markov and Toeplitz matrices. Ann. Probab. 34, 1–38 (2006)

Kolokolnikov, T., Osting, B., Von Brecht, J.: Algebraic connectivity of Erdős–Rényi graphs near the connectivity threshold (2014, preprint). https://www.mathstat.dal.ca/~tkolokol/papers/braxton-james.pdf

Feige, U., Ofek, E.: Spectral techniques applied to sparse random graphs. Random Struct. Algorithms 27(2), 251–275 (2005)

Acknowledgements

Aleksandra Krawiec, Ryszard Kukulski and Zbigniew Puchała acknowledge the support from the National Science Centre, Poland, under Project Number 2016/22/E/ST6/00062. Adam Glos was supported by the National Science Centre under Project Number DEC-2011/03/D/ST6/00413.

Appendices

A Element-wise bound on principal eigenvector

Let \(G_{n,p}\) be a random Erdős–Rényi graph, let \(\deg (v)\) be the degree of the vertex \(v \in V\), and let A be its adjacency matrix with eigenvalues \(\lambda _1 \ge \lambda _2 \ge \cdots \ge \lambda _n\). Let also \(|\lambda _i\rangle \) be an eigenvector corresponding to the eigenvalue \(\lambda _i\) and \(|s\rangle = \frac{1}{\sqrt{n}}|\mathbf {1}\rangle =\frac{1}{\sqrt{n}} \sum _{v=1}^n |v\rangle \).

Proposition 5

For the probability \(p = \omega \left( \ln ^3(n)/(n\log ^2\log n) \right) \) and some constant \(c>0\) we have

with probability \(1-o(1)\).

Proof

Using [20], we have

with probability \(1-o(1)\). The first inequality was shown in the proof of Theorem 1, while the second and third inequalities come from Theorem 3 in [20]. Note that \(\deg (v)\) follows a binomial distribution. Using Lindeberg’s CLT and the fact that the convergence is uniform, one can show that

where \(\mathcal X\) is a random variable with standard normal distribution. Let \(A=\lambda _1|\lambda _1\rangle \langle \lambda _1|+\sum _{i\ge 2} \lambda _i|\lambda _i\rangle \langle \lambda _i|\) and \(|s\rangle = \alpha |\lambda _1\rangle +\beta |\lambda _1^\perp \rangle \). Assume that \(|\lambda _1\rangle ,|\lambda _1^\perp \rangle ,|\lambda _i\rangle \) are normed vectors and \(|\lambda _1^\perp \rangle =\sum _{i \ge 2} \gamma _i |\lambda _i\rangle \). By the Perron–Frobenius theorem, we can choose a vector \(|\lambda _1\rangle \) such that \(\langle {v}|{\lambda _1}\rangle \ge 0\) and hence obtain \(\langle {s}|{\lambda _1}\rangle =\alpha >0\). Thus,

With probability \(1-o(1)\), using Eq. (14) we have

and thus since \(\beta ^2=1-\alpha ^2\), then

Eventually, we receive

where the fourth inequality comes from Eq. (15). We know that \(|\deg (v)-np| \le 2 \sqrt{n \ln (n) p(1-p)}\) with probability greater than \(1-\frac{1}{n^2}\). Thus, with probability \(1-\frac{1}{n}\) the above is true for all \(v \in V\) simultaneously. Now, since \(\deg (v)= \langle v| A |\mathbf {1}\rangle \), we have

The lower bound can be estimated as

and similarly the upper bound

Consequently

for all \(v \in V\). Let \(l=c\frac{\ln (n)}{\ln (\sqrt{\frac{np}{\ln (n)}}/4)}\), where \(c=c(n,p) \in [1, 2)\) is chosen to satisfy \(l=\left\lceil \frac{\ln (n)}{\ln (\sqrt{\frac{np}{\ln (n)}}/4)} \right\rceil \). Hence

for all \(v \in V\). On the other hand

Using Eqs. (14, 15) we are able to estimate \(\frac{\lambda _i}{\lambda _1}\) by

Thus

where the last inequality comes from Eq. (21) and \(\Vert \cdot \Vert _2\) denotes the Euclidean norm. By Eqs. (25, 27) we get

for all \(v \in V\) and using Eqs. (21, 29) we eventually obtain

for all \(v \in V\). In order to finish the proof it is necessary to show that

and

We need to estimate how quickly \(d^l\) converges to 1. Using the fact that \(d \rightarrow 1\), it is enough to observe that

and thus

The second term on the LHS of Eq. (32) converges to 0 more rapidly than the bound, which completes the proof of the lower bound. The upper bound can be shown analogously. \(\square \)

B Distribution of the largest eigenvalue of adjacency matrix

Theorem 6.2 from [19] considers the distribution of the largest eigenvalue of the rescaled adjacency matrix \(\tilde{A} = A/ \sqrt{(1-p)pn}\). The authors show that as long as \(p>\frac{1}{n}\), then

Furthermore, under another condition \(p=\omega (\log ^8(n)/n)\) we have

in distribution. This allows us to derive the distribution of the largest eigenvalue of the \(\frac{1}{np} A\) matrix

where \(\mathcal X \rightarrow \mathcal {N}(0,1)\). Hence we have that \(\lambda _{1}(\frac{1}{np} A) \sim \mathcal N(1,\frac{1}{n}\sqrt{\frac{2(1-p)}{p}})\). Note that under the condition \(p=\omega (\log ^8(n)/n)\), the standard deviation tends to 0. This means that the distribution of the largest eigenvalue actually tends to the Dirac distribution \(\delta _{x=1}\).

This gives us a bound for \(\lambda _{1}(\frac{1}{np} A)\). Note that
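The size of the fluctuations of \(\lambda _{1}(\frac{1}{np} A)\) relative to the scale \(\frac{1}{\sqrt{n}}\) relevant for the search can be checked with simple arithmetic. The sketch below evaluates the standard deviation \(\frac{1}{n}\sqrt{2(1-p)/p}\) of the normal approximation and its ratio to \(\frac{1}{\sqrt{n}}\). The function names and the fixed value of p are illustrative assumptions; the asymptotic condition on p is not modelled.

```python
import math


def eigenvalue_std(n: int, p: float) -> float:
    """Standard deviation (1/n) * sqrt(2(1-p)/p) of the normal approximation."""
    return math.sqrt(2.0 * (1.0 - p) / p) / n


def std_relative_to_search_scale(n: int, p: float) -> float:
    """Ratio of the standard deviation to 1/sqrt(n)."""
    return eigenvalue_std(n, p) * math.sqrt(n)


if __name__ == "__main__":
    for n in (10**4, 10**6, 10**8):
        print(n, eigenvalue_std(n, 0.1), std_relative_to_search_scale(n, 0.1))
```

For fixed p the ratio decays like \(1/\sqrt{np}\), so typical deviations of the largest eigenvalue from 1 become negligible compared with \(\frac{1}{\sqrt{n}}\) as n grows.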

The probability tends to 1 as long as the argument tends to \(\infty \). In order to achieve this, we need to assume \(n\sqrt{p}\delta \rightarrow \infty \) as \(n\rightarrow \infty \). This can be done by choosing \(\delta = \omega ( \frac{1}{n\sqrt{p}})\). Eventually, we have asymptotically almost surely

Note that for \(p=o(1)\) the bound is better than the one used in [4].

C Laplacian matrix spectrum

The algebraic connectivity satisfies \(\mu _{n-1}=np+O(\sqrt{np\log n})\) for \(p=\omega (\log (n)/n)\). Similarly, we conclude from the results of Bryc et al. [22] that \(\mu _1 \sim np\).

Theorem 6

Let \(L_n\) be a Laplacian matrix of random Erdős–Rényi graph \(\mathcal G(n,p)\), where \(p=\omega (\frac{\log n}{n})\). Then, \(\mu _1=\mu (L) \sim np\).

Proof

By Theorem 1.5 from [22], if \(\tilde{L}\) is a symmetric matrix whose off-diagonal elements have a two-point distribution with mean 0 and variance \(p(1-p)\), and \(\tilde{L}_{ii} = \sum _{j \ne i} \tilde{L}_{ij}\), then

Note that in the following version p may depend on n. Hence, we can extend the Corollary 1.6 from the same paper.

Let \(L_n= \tilde{L}_n + Y_n\), where \(Y_n\) is a deterministic matrix with \(-p\) off the diagonal and \((n-1)p\) on the diagonal. Note that \(Y_n\) is the expectation of a random Erdős–Rényi Laplacian matrix. \(Y_n\) has a single 0 eigenvalue, and all of its other eigenvalues are equal to np. Hence, \(\mu (Y_n)= \Vert Y_n\Vert = np\). Then, we have

where the limit comes from Eq. (41), assuming \(p=\omega (\log n/n)\). Finally, \(\frac{\mu (L_n)}{np} \rightarrow 1\). \(\square \)

D The largest eigenvalue of Laplacian matrix near the connectivity threshold

Suppose G is a random graph chosen according to \(\mathcal G(n,p_0\frac{\log (n)}{n})\) distribution, for \(p_0>1\) being a constant. It can be shown that

and

see [21], Exercise III.4. Here \(\delta \) and \(\Delta \) denote, respectively, the minimal and maximal degree of the graph. In [23], the authors have shown that, provided

we have

where \(\mu _{n-1}\) is the second smallest eigenvalue of the Laplacian matrix. In fact, similar behavior can be stated for the largest eigenvalue, i.e., if

we have

While we plan to prove the statement above, it is possible that the RHS can be reduced to \(O(\sqrt{np})\) by following the proof in [23]. Nonetheless, we are satisfied with the mentioned result. The proof is very similar to the proof of Lemma 3.4 in [23]. Furthermore, note that the theorem holds for \(p_0>0\).

Theorem 7

Suppose there exists a \(p_0>0\) so that \(np\ge p_0\log (n)\) and \(\Delta \sim cnp\) almost surely. Then, almost surely \(\mu _{1} \sim cnp\).

Proof

Note that since the eigenvector corresponding to the 0 eigenvalue is the equal superposition, we have

Note that

by Theorem 2.5 from [24]. Similarly, one can show \(\mu _{1 }\ge \Delta \) by taking the maximum over canonical basis vectors. After combining those bounds with \(\Delta \sim cnp\), we obtain the result. \(\square \)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Glos, A., Krawiec, A., Kukulski, R. et al. Vertices cannot be hidden from quantum spatial search for almost all random graphs. Quantum Inf Process 17, 81 (2018). https://doi.org/10.1007/s11128-018-1844-7

DOI: https://doi.org/10.1007/s11128-018-1844-7