Abstract

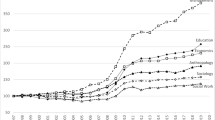

Recently there has been huge growth in the number of articles covered by the Web of Science (WoS), but it is unclear whether this reflects a growth of science or simply the database provider's additional coverage of journals that already existed. An analysis of journal categories in the period 2000–2008 shows that the number of basic journals covered by WoS steadily decreased, whereas the number of new, recently established journals increased. A rising number of older journals is also covered. These developments imply a growing number of articles, but a more significant effect is the enlargement of traditional, basic journals in terms of annual articles. Overall, the data set is quite unstable because of the high fluctuation caused by the annual selection criterion, the impact factor. In any case, it is important to look at the structures at the level of specific fields in order to differentiate between “real” and “artificial” growth. Our findings suggest that even though a growth of about 34 % can be measured in article numbers in the period 2000–2008, 17 % of this growth stems from the inclusion of old journals that had been published for a longer time but had simply not been included in the database before.
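Separating “real” from “artificial” growth amounts to decomposing the change in article counts by journal category. A minimal Python sketch, assuming hypothetical category labels and toy counts (not the study's data), expresses each category's contribution in percentage points of the start-year total:

```python
"""Decompose the change in annual article counts by journal category.

All category names and counts are hypothetical toy values, NOT study data.
"""

from typing import Dict


def growth_decomposition(start: Dict[str, int], end: Dict[str, int]) -> Dict[str, float]:
    """Return each category's contribution to total growth, in percentage
    points of the start-year total (a category missing in a year counts as 0)."""
    total_start = sum(start.values())
    categories = sorted(set(start) | set(end))
    return {
        cat: 100.0 * (end.get(cat, 0) - start.get(cat, 0)) / total_start
        for cat in categories
    }


# Hypothetical article counts per journal category in a start and an end year.
articles_start = {"basic": 600, "new": 400}
articles_end = {"basic": 660, "old_newly_covered": 50, "new": 490}

contrib = growth_decomposition(articles_start, articles_end)
print(contrib)                # {'basic': 6.0, 'new': 9.0, 'old_newly_covered': 5.0}
print(sum(contrib.values()))  # 20.0
```

In this toy case the newly covered old journals add 5 of the 20 percentage points of growth; the same split can be computed separately for each field.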

Notes

Indeed, some journals in the WoS have more than one volume number per year. Even if we assume that journals which started in the middle of a year henceforth begin each new volume at that point of the year, and thus have two volume numbers per year, we identified 5.5 % of the journals in the data set for this study with more than two volume numbers per year. We consider this error rate negligible for a macro-level study. Furthermore, the percentage of such journals is diminishing: in 2000, 6.2 % of the journals in the database have more than two volume numbers, while in 2008 we find merely 4.0 %. This might hint at a correction by the database provider or an adjustment/standardization on the publishers' side.
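The check described in this note can be sketched as counting distinct volume numbers per journal and year. This is a minimal Python illustration, assuming a hypothetical record layout of (journal, year, volume) tuples; the threshold of two volumes mirrors the allowance for journals that started mid-year:

```python
"""Share of journals with more than two volume numbers in one publication year.

The (journal, year, volume) record layout is a hypothetical assumption.
"""

from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

Record = Tuple[str, int, str]  # (journal title, publication year, volume)


def multi_volume_share(records: Iterable[Record], max_volumes: int = 2) -> Dict[int, float]:
    """Per year, the percentage of journals with more than `max_volumes` distinct volumes."""
    volumes: Dict[Tuple[int, str], Set[str]] = defaultdict(set)
    for journal, year, volume in records:
        volumes[(year, journal)].add(volume)

    journals_per_year: Dict[int, int] = defaultdict(int)
    flagged_per_year: Dict[int, int] = defaultdict(int)
    for (year, _journal), vols in volumes.items():
        journals_per_year[year] += 1
        if len(vols) > max_volumes:
            flagged_per_year[year] += 1

    return {
        year: 100.0 * flagged_per_year[year] / n
        for year, n in sorted(journals_per_year.items())
    }


# Hypothetical example: journal "B" carries three volume numbers in 2000.
sample = [
    ("A", 2000, "12"), ("A", 2000, "13"),
    ("B", 2000, "40"), ("B", 2000, "41"), ("B", 2000, "42"),
    ("A", 2008, "20"), ("B", 2008, "56"),
]
print(multi_volume_share(sample))  # {2000: 50.0, 2008: 0.0}
```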

Of course, using a journal's first appearance in the database would be exactly the opposite of what we want to measure.
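The note contrasts a journal's first appearance in the database with its actual age. One way to approximate age independently of coverage is to read it off the volume number. The following Python sketch assumes, as a hypothetical simplification, one volume per calendar year; the classification function and its `period_start` and `tolerance` parameters are illustrative names, not the study's procedure:

```python
"""Estimate a journal's age from its volume number rather than from its
first appearance in the database.

Hypothetical simplification: one volume per calendar year, so volume v in
year y implies a start year of roughly y - v + 1.
"""


def estimated_start_year(year: int, volume: int) -> int:
    """Approximate founding year, assuming one volume per year."""
    if volume < 1:
        raise ValueError("volume numbers are expected to start at 1")
    return year - volume + 1


def journal_category(year: int, volume: int, first_db_year: int,
                     period_start: int = 2000, tolerance: int = 3) -> str:
    """Hypothetical three-way split echoing the abstract's journal categories.

    `period_start` and `tolerance` are illustrative parameters, not values
    taken from the study.
    """
    if first_db_year <= period_start:
        return "basic"              # already indexed at the start of the period
    if first_db_year - estimated_start_year(year, volume) <= tolerance:
        return "new"                # founded shortly before being indexed
    return "old_newly_covered"      # published for years before being indexed


print(journal_category(2006, 2, 2006))    # new
print(journal_category(2006, 45, 2006))   # old_newly_covered
print(journal_category(2006, 45, 1998))   # basic
```

Classifying by first database appearance alone would label both 2006 entrants above as “new”, which is exactly the confusion the note warns against.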

Namely, these are the codes “IH” and “IT” representing the products “Social Sciences and Humanities Proceedings” and “Scientific and Technical Proceedings”.
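Records can be separated by these product codes with a simple filter. Whether such records were kept or dropped is not specified in this note, so the Python sketch below only splits them; the record layout (a dict with a `product_code` key) is a hypothetical assumption:

```python
"""Separate proceedings records from other records by product code.

The codes "IH" and "IT" come from the note; the dict-based record layout
with a "product_code" key is a hypothetical assumption.
"""

from typing import Dict, List, Tuple

PROCEEDINGS_CODES = frozenset({"IH", "IT"})

RecordDict = Dict[str, str]


def split_by_product(records: List[RecordDict]) -> Tuple[List[RecordDict], List[RecordDict]]:
    """Return (proceedings records, all other records), preserving order."""
    proceedings = [r for r in records if r.get("product_code") in PROCEEDINGS_CODES]
    others = [r for r in records if r.get("product_code") not in PROCEEDINGS_CODES]
    return proceedings, others


sample = [
    {"id": "1", "product_code": "IT"},
    {"id": "2", "product_code": "other"},  # placeholder for a non-proceedings code
    {"id": "3", "product_code": "IH"},
]
proceedings, others = split_by_product(sample)
print([r["id"] for r in proceedings], [r["id"] for r in others])  # ['1', '3'] ['2']
```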

Such slicing of findings into smaller units in order to publish more frequently is also called “salami publishing”; it is discouraged by journals but difficult to detect (Roberts 2009).

We are aware that other factors also determine whether a journal is included in the database. However, none of them is as volatile as the citation rate or the impact factor (Thomson Reuters 2012).
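The volatility follows partly from how the standard two-year impact factor is defined: citations received in year Y by items published in Y-1 and Y-2, divided by the number of citable items published in those two years. A minimal Python sketch with hypothetical numbers:

```python
"""Two-year journal impact factor (standard definition; numbers are hypothetical).

IF(Y) = citations in year Y to items published in Y-1 and Y-2
        / number of citable items published in Y-1 and Y-2
"""

from typing import Mapping


def impact_factor(cites: Mapping[int, int], items: Mapping[int, int], year: int) -> float:
    """cites[p]: citations received in `year` by items published in year p;
    items[p]: citable items published in year p."""
    window = (year - 1, year - 2)
    denominator = sum(items.get(p, 0) for p in window)
    if denominator == 0:
        raise ValueError("no citable items in the two-year window")
    return sum(cites.get(p, 0) for p in window) / denominator


# Hypothetical small journal: 40 citable items, 80 citations counted in 2008.
print(impact_factor({2007: 30, 2006: 50}, {2007: 20, 2006: 20}, 2008))  # 2.0
# Ten extra citations already shift the value noticeably.
print(impact_factor({2007: 40, 2006: 50}, {2007: 20, 2006: 20}, 2008))  # 2.25
```

For a journal with few citable items, a handful of citations moves the ratio considerably from one year to the next, which is why a selection criterion based on it can make coverage fluctuate.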

References

Glänzel, W., & Moed, H. F. (2002). Journal impact measures in bibliometric research. Scientometrics, 53, 171–193.

Gupta, B. M., & Dhawan, S. M. (2008). Condensed matter physics: an analysis of India’s research output, 1993–2001. Scientometrics, 75, 123–144.

Gupta, B. M., Sharma, P., & Karisiddappa, C. R. (1997). Growth of research literature in scientific specialities. A modelling perspective. Scientometrics, 40(3), 507–528.

Larsen, P. O., & von Ins, M. (2010). The rate of growth in scientific publication and the decline in coverage provided by Science Citation Index. Scientometrics, 84, 575–603.

Leydesdorff, L., Cozzens, S., & van den Besselaar, P. (1994). Tracking areas of strategic importance using scientometric journal mappings. Research Policy, 23, 217–229.

Mallig, N. (2010). A relational database for bibliometric analysis. Journal of Informetrics, 4(4), 564–580.

National Science Board. (2010). Science and engineering indicators 2010. Arlington, VA: National Science Foundation.

Plerou, V., Nunes Amaral, L. A., Gopikrishnan, P., Meyer, M., & Stanley, H. E. (1999). Similarities between the growth dynamics of university research and of competitive economic activities. Nature, 400(6743), 433–437.

Price, D. J. de Solla (1971). Little science, big science. Frankfurt/M: Suhrkamp.

Roberts, J. (2009). An author’s guide to publication ethics: A review of emerging standards in biomedical journals. Headache, 49(4), 578–589.

Schmoch, U., Michels, C., Neuhäusler, P., & Schulze, N. (2012). Performance and Structures of the German Science System 2011. Germany in international comparison, China’s profile, behaviour of German authors, comparison of Web of Science and SCOPUS. Studien zum deutschen Innovationssystem. Berlin: Expertenkommission Forschung und Innovation.

Seglen, P. O. (1992). The skewness of science. Journal of the American Society for Information Science, 43, 628–638.

Stehr, N. (1994). Knowledge societies. Thousand Oaks, CA: SAGE Publications.

Thomson Reuters. (2012). The Thomson Reuters journal selection process. Accessed 10 March 2012, from http://thomsonreuters.com/products_services/science/free/essays/journal_selection_process/.

Van Raan, A. F. J. (2000). On growth, ageing, and fractal differentiation of science. Scientometrics, 47(2), 347–362.

Acknowledgments

The research underlying this paper was supported by the German Federal Ministry for Education and Research (BMBF, project number 01PQ08004D). Certain data included in this paper are derived from the Science Citation Index Expanded (SCIE), the Social Science Citation Index (SSCI), the Arts and Humanities Citation Index (AHCI), and the Index to Social Sciences & Humanities Proceedings (ISSHP) (all updated June 2010) prepared by Thomson Reuters (Scientific) Inc. (TR®), Philadelphia, Pennsylvania, USA. ©Copyright Thomson Reuters (Scientific) 2010. All rights reserved.

About this article

Cite this article

Michels, C., Schmoch, U. The growth of science and database coverage. Scientometrics 93, 831–846 (2012). https://doi.org/10.1007/s11192-012-0732-7

DOI: https://doi.org/10.1007/s11192-012-0732-7