Abstract

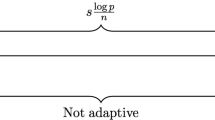

In linear multiple regression, "enhancement" is said to occur when \(R^{2}=\boldsymbol{b}'\boldsymbol{r}>\boldsymbol{r}'\boldsymbol{r}\), where \(\boldsymbol{b}\) is a \(p\times 1\) vector of standardized regression coefficients and \(\boldsymbol{r}\) is a \(p\times 1\) vector of correlations between a criterion \(y\) and a set of standardized regressors, \(\boldsymbol{x}\). When \(p=1\), \(\boldsymbol{b}\equiv\boldsymbol{r}\) and enhancement cannot occur. When \(p=2\), for all full-rank \(\boldsymbol{R}_{xx}\neq\boldsymbol{I}\), \(\boldsymbol{R}_{xx}=E[\boldsymbol{x}\boldsymbol{x}']=\boldsymbol{V}\boldsymbol{\Lambda}\boldsymbol{V}'\) (where \(\boldsymbol{V}\boldsymbol{\Lambda}\boldsymbol{V}'\) denotes the eigen decomposition of \(\boldsymbol{R}_{xx}\); \(\lambda_{1}>\lambda_{2}\)), the set \(\boldsymbol{B}_{1}:=\{\boldsymbol{b}_{i}:R^{2}=\boldsymbol{b}_{i}'\boldsymbol{r}_{i}=\boldsymbol{r}_{i}'\boldsymbol{r}_{i};\,0<R^{2}\le1\}\) contains four vectors; the set \(\boldsymbol{B}_{2}:=\{\boldsymbol{b}_{i}:R^{2}=\boldsymbol{b}_{i}'\boldsymbol{r}_{i}>\boldsymbol{r}_{i}'\boldsymbol{r}_{i};\,0<R^{2}\le1;\,R^{2}\lambda_{p}\le\boldsymbol{r}_{i}'\boldsymbol{r}_{i}<R^{2}\}\) contains an infinite number of vectors. When \(p\ge3\) (and \(\lambda_{1}>\lambda_{2}>\cdots>\lambda_{p}\)), both sets contain an uncountably infinite number of vectors. Geometrical arguments demonstrate that \(\boldsymbol{B}_{1}\) occurs at the intersection of two hyper-ellipsoids in \(\mathbb{R}^{p}\). Equations are provided for populating the sets \(\boldsymbol{B}_{1}\) and \(\boldsymbol{B}_{2}\) and for demonstrating that maximum enhancement occurs when \(\boldsymbol{b}\) is collinear with the eigenvector that is associated with \(\lambda_{p}\) (the smallest eigenvalue of the predictor correlation matrix). These equations are used to illustrate the logic and the underlying geometry of enhancement in population multiple-regression models. R code for simulating population regression models that exhibit enhancement of any degree and any number of predictors is included in Appendices A and B.
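
The set definitions above can be checked numerically. What follows is a minimal pure-Python sketch under stated assumptions; it is not the R code of Appendices A and B, and every function name in it is hypothetical. It uses \(\boldsymbol{r}=\boldsymbol{R}_{xx}\boldsymbol{b}\) (which follows from \(\boldsymbol{b}=\boldsymbol{R}_{xx}^{-1}\boldsymbol{r}\)), so \(R^{2}=\boldsymbol{b}'\boldsymbol{R}_{xx}\boldsymbol{b}\) and \(\boldsymbol{r}'\boldsymbol{r}=\boldsymbol{b}'\boldsymbol{R}_{xx}^{2}\boldsymbol{b}\). Writing \(\boldsymbol{c}=\boldsymbol{V}'\boldsymbol{b}\) gives \(R^{2}=\sum_j\lambda_j c_j^{2}\) and \(\boldsymbol{r}'\boldsymbol{r}=\sum_j\lambda_j^{2}c_j^{2}\). Both equations are linear in the \(c_j^{2}\), and the ratio \(t=\boldsymbol{r}'\boldsymbol{r}/R^{2}\) is a weighted average of the \(\lambda_j\) (weights \(\lambda_j c_j^{2}/R^{2}\)), so \(\lambda_p\le t\le\lambda_1\). For \(p=2\) the sketch assumes \(\boldsymbol{R}_{xx}\) has off-diagonal correlation \(\rho\) with \(0<|\rho|<1\), whose eigenpairs are known in closed form: \(t=1\) yields the four members of \(\boldsymbol{B}_1\), \(\lambda_2\le t<1\) yields members of \(\boldsymbol{B}_2\), and \(t=\lambda_2\) yields \(\boldsymbol{b}\) collinear with \(\boldsymbol{v}_2\) (maximum enhancement). For \(p=3\) it assumes an illustrative matrix with \(r_{12}=\rho\), \(r_{13}=r_{23}=0\), chosen only because its eigenvalues \(1+\rho>1>1-\rho\) are distinct and known, and writes down a one-parameter continuum of \(\boldsymbol{B}_1\) members.

```python
import math


def mat_vec(R, b):
    """Return the matrix-vector product R b (R given as a list of rows)."""
    return [sum(rij * bj for rij, bj in zip(row, b)) for row in R]


def dot(u, v):
    """Return the inner product u'v."""
    return sum(ui * vi for ui, vi in zip(u, v))


def enhancement_summary(R, b):
    """Return (R^2, r'r), where r = R b holds the implied validities."""
    r = mat_vec(R, b)
    return dot(b, r), dot(r, r)


def eigen_2x2(rho):
    """Closed-form eigenpairs of [[1, rho], [rho, 1]], ordered lam1 > lam2."""
    if not 0 < abs(rho) < 1:
        raise ValueError("need a full-rank R_xx != I, i.e. 0 < |rho| < 1")
    s = 1.0 if rho > 0 else -1.0
    k = 1.0 / math.sqrt(2.0)
    return 1 + abs(rho), 1 - abs(rho), (k, s * k), (k, -s * k)


def vectors_with_ratio(rho, R2, t):
    """All b (p = 2) with b'R b = R2 and r'r = t * R2, where lam2 <= t <= lam1.

    t = 1 gives the four members of B1; lam2 <= t < 1 gives members of B2;
    t = lam2 gives maximum enhancement (b collinear with v2).
    """
    lam1, lam2, v1, v2 = eigen_2x2(rho)
    if not lam2 - 1e-12 <= t <= lam1 + 1e-12:
        raise ValueError("t must lie in [lam2, lam1]")
    # Solve lam1*c1^2 + lam2*c2^2 = R2 and lam1^2*c1^2 + lam2^2*c2^2 = t*R2
    # for the squared eigen-coordinates (clamped at 0 against rounding).
    c1_sq = max(0.0, R2 * (t - lam2) / (lam1 * (lam1 - lam2)))
    c2_sq = max(0.0, R2 * (lam1 - t) / (lam2 * (lam1 - lam2)))
    out = []
    for s1 in (1, -1):
        for s2 in (1, -1):
            c1, c2 = s1 * math.sqrt(c1_sq), s2 * math.sqrt(c2_sq)
            b = [c1 * v1[i] + c2 * v2[i] for i in range(2)]
            if b not in out:  # sign flips coincide when a coordinate is zero
                out.append(b)
    return out


def b1_family_p3(rho, R2, s):
    """One member of B1 for the illustrative p = 3 matrix
    [[1, rho, 0], [rho, 1, 0], [0, 0, 1]], 0 < rho < 1, whose eigenvalues are
    1 + rho > 1 > 1 - rho. Every s in [0, R2 / (2 * (1 + rho))] works.
    """
    if not 0 < rho < 1 or not 0 <= s <= R2 / (2 * (1 + rho)) + 1e-12:
        raise ValueError("need 0 < rho < 1 and 0 <= s <= R2 / (2 * (1 + rho))")
    k = 1.0 / math.sqrt(2.0)
    u1 = s                                  # on v1 = (k, k, 0), lam = 1 + rho
    u3 = s * (1 + rho) / (1 - rho)          # on v3 = (k, -k, 0); forces r'r = b'R b
    u2 = max(0.0, R2 - 2 * (1 + rho) * s)   # on v2 = (0, 0, 1); forces b'R b = R2
    c1, c2, c3 = math.sqrt(u1), math.sqrt(u2), math.sqrt(u3)
    return [k * (c1 + c3), k * (c1 - c3), c2]


if __name__ == "__main__":
    rho, R2 = 0.5, 0.25
    R = [[1.0, rho], [rho, 1.0]]
    for label, t in (("B1", 1.0), ("B2", 0.75), ("max", 1 - rho)):
        for b in vectors_with_ratio(rho, R2, t):
            print(label, [round(x, 4) for x in b], enhancement_summary(R, b))
    R3 = [[1.0, rho, 0.0], [rho, 1.0, 0.0], [0.0, 0.0, 1.0]]
    for s in (0.0, 0.04, 0.08):
        print("B1, p = 3", enhancement_summary(R3, b1_family_p3(rho, R2, s)))
```

Each printed pair is \((R^{2},\boldsymbol{r}'\boldsymbol{r})\); enhancement corresponds to the second entry being smaller than the first. Working in eigen-coordinates keeps the sketch dependency-free, at the cost of relying on matrices whose eigenpairs are known in closed form; a general version would substitute a numerical eigen decomposition.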

Cite this article

Waller, N.G. The Geometry of Enhancement in Multiple Regression. Psychometrika 76, 634–649 (2011). https://doi.org/10.1007/s11336-011-9220-x
