Abstract

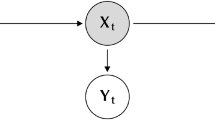

We study Markov models whose state spaces arise from the Cartesian product of two or more discrete random variables. We show how to parameterize the transition matrices of these models as a convex combination—or mixture—of simpler dynamical models. The parameters in these models admit a simple probabilistic interpretation and can be fitted iteratively by an Expectation-Maximization (EM) procedure. We derive a set of generalized Baum-Welch updates for factorial hidden Markov models that make use of this parameterization. We also describe a simple iterative procedure for approximately computing the statistics of the hidden states. Throughout, we give examples where mixed memory models provide a useful representation of complex stochastic processes.
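As a rough illustration of the parameterization described above, the sketch below builds a transition distribution as a convex combination of simpler transition matrices. It is a minimal sketch under stated assumptions, not the paper's implementation: the state is assumed to be a pair of binary variables (x, y), and the names `mixed_transition`, `psi`, `A_xx`, and `A_yx`, along with all numerical values, are hypothetical and chosen only for illustration.

```python
from itertools import product


def mixed_transition(psi, components, prev_state):
    """Return a distribution over the next value as a convex combination.

    psi[m] is the mixing weight on component m. components[m] is a
    row-stochastic matrix whose row is selected by the m-th variable
    of prev_state. (All names here are illustrative assumptions.)
    """
    if any(w < 0 for w in psi) or abs(sum(psi) - 1.0) > 1e-12:
        raise ValueError("mixing weights must be nonnegative and sum to 1")
    n_out = len(components[0][0])
    dist = [0.0] * n_out
    for weight, matrix, value in zip(psi, components, prev_state):
        row = matrix[value]
        for j in range(n_out):
            dist[j] += weight * row[j]
    return dist


# Hypothetical example: predict x_t from the previous pair (x_{t-1}, y_{t-1}).
A_xx = [[0.9, 0.1], [0.2, 0.8]]  # assumed P(x_t | x_{t-1})
A_yx = [[0.6, 0.4], [0.3, 0.7]]  # assumed P(x_t | y_{t-1})
psi = [0.75, 0.25]               # assumed mixing weights

# One distribution over x_t for each joint previous state (x, y).
table = {
    state: mixed_transition(psi, [A_xx, A_yx], state)
    for state in product(range(2), repeat=2)
}

for state, dist in sorted(table.items()):
    print(state, [round(p, 3) for p in dist])
```

Because each component matrix is row-stochastic and the weights are nonnegative and sum to one, every row of the resulting table is itself a valid probability distribution. The mixing weights can be read as the probability that a given component is responsible for the transition, which is what makes an EM-style fit natural.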

About this article

Cite this article

Saul, L.K., Jordan, M.I. Mixed Memory Markov Models: Decomposing Complex Stochastic Processes as Mixtures of Simpler Ones. Machine Learning 37, 75–87 (1999). https://doi.org/10.1023/A:1007649326333
