Abstract

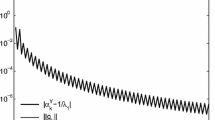

This paper presents a novel framework for developing globally convergent algorithms that never evaluate the objective function itself. For a twice continuously differentiable function, one way to implement the framework is to apply linear bounding functions (LBFs) to its gradient. The resulting algorithm obtains an improved point at every iteration without a line search. Under certain conditions, it achieves a superlinear convergence rate of at least 1.618 without explicitly computing the Hessian. Furthermore, the strategy for switching from the negative gradient direction to a Newton-like direction is derived naturally from the framework and is computationally effective. Numerical examples illustrate how the algorithm works.
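The abstract does not give the update rules, so the following is only a minimal univariate sketch under stated assumptions, not the authors' algorithm. It assumes f'' ≤ L on the relevant segment (L is a hypothetical known constant). Then g(x) + L·t bounds g(x + t) from above and g(x) − L·t bounds g(x − t) from below for t ≥ 0, so the gradient g = f' keeps its sign strictly between x and x − g(x)/L, and moving to x − g(x)/L strictly decreases f whenever g(x) ≠ 0, with no evaluation of f and no line search. For context, 1.618 ≈ (1 + √5)/2 is the classical order of convergence of the secant method at a simple root, which likewise needs no second derivative; the second phase below therefore uses plain secant steps on g. The switching threshold `switch_tol`, the helper names `lbf_step` and `minimize_1d`, and the test function are all hypothetical choices made for illustration.

```python
import math


def lbf_step(x: float, g: float, L: float) -> float:
    """Gradient-bounding step (sketch); assumes f'' <= L on the segment.

    Then g(x + t) <= g(x) + L*t and g(x - t) >= g(x) - L*t for t >= 0, so g
    keeps its sign strictly inside the segment from x to x - g/L, and f
    strictly decreases along it whenever g != 0. No value of f is needed.
    """
    return x - g / L


def minimize_1d(grad, x0, L, switch_tol=1e-2, tol=1e-12, max_iter=100):
    """Two-phase sketch: certified LBF steps, then secant steps on grad.

    switch_tol is a hypothetical switching threshold, not the paper's rule.
    """
    x, g = x0, grad(x0)
    history = [x]
    # Phase 1: certified descent steps along the negative gradient.
    while abs(g) > switch_tol and len(history) < max_iter:
        x = lbf_step(x, g, L)
        g = grad(x)
        history.append(x)
    # One more certified step supplies the second starting point for secant.
    x_prev, g_prev = x, g
    x = lbf_step(x, g, L)
    g = grad(x)
    history.append(x)
    # Phase 2: secant iteration on g = f'. Near a simple root it converges
    # with order (1 + sqrt(5)) / 2 ~ 1.618 and never forms f''.
    while abs(g) > tol and len(history) < max_iter:
        denom = g - g_prev
        if denom == 0.0:
            break  # secant slope undefined; stop at the current point
        x_new = x - g * (x - x_prev) / denom
        x_prev, g_prev = x, g
        x, g = x_new, grad(x_new)
        history.append(x)
    return x, history


# Hypothetical test function f(x) = log(cosh(x)) + 0.5*(x - 3)**2, whose
# second derivative sech(x)**2 + 1 lies in (1, 2], so L = 2 is a valid bound.
def grad(x: float) -> float:
    return math.tanh(x) + x - 3.0


x_star, hist = minimize_1d(grad, x0=-5.0, L=2.0)
print(x_star, len(hist))
```

Only gradient values are used throughout; in phase 2 the monotone decrease of f is no longer certified, which is the trade-off this sketch makes for the faster local rate.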




Cite this article

Wang, X., Chang, T.S. A Framework for Globally Convergent Algorithms Using Gradient Bounding Functions. Journal of Optimization Theory and Applications 100, 661–697 (1999). https://doi.org/10.1023/A:1022694608370
