Abstract

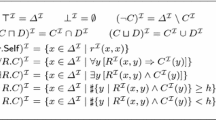

This paper introduces a new framework for constructing learning algorithms. Our methods involve master algorithms which use learning algorithms for intersection-closed concept classes as subroutines. For example, we give a master algorithm capable of learning any concept class whose members can be expressed as nested differences (for example, c₁ − (c₂ − (c₃ − (c₄ − c₅)))) of concepts from an intersection-closed class. We show that our algorithms are optimal or nearly optimal with respect to several different criteria. These criteria include: the number of examples needed to produce a good hypothesis with high confidence, the worst case total number of mistakes made, and the expected number of mistakes made in the first t trials.
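The abstract does not spell out the master algorithm, so the following is only a minimal sketch of the general idea, not the authors' exact procedure. It assumes axis-parallel rectangles in the plane as the intersection-closed class and a "closure" subroutine that returns the smallest rectangle containing a set of points. All names (`closure`, `in_box`, `learn_nested_difference`, `predict`) are hypothetical and chosen for illustration. The sketch alternates between labels: it takes the closure of the positive examples, then the closure of the negative examples that fall inside it, and so on, yielding a hypothesis of the form h₁ − (h₂ − (h₃ − ...)).

```python
from typing import List, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (xmin, xmax, ymin, ymax)
Example = Tuple[Point, bool]             # (point, label)


def closure(points: List[Point]) -> Box:
    """Smallest axis-parallel box containing all points (points must be non-empty)."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return (min(xs), max(xs), min(ys), max(ys))


def in_box(box: Box, p: Point) -> bool:
    """True if p lies inside the closed box."""
    xmin, xmax, ymin, ymax = box
    return xmin <= p[0] <= xmax and ymin <= p[1] <= ymax


def learn_nested_difference(sample: List[Example]) -> List[Box]:
    """Return boxes [h1, h2, ...] representing h1 - (h2 - (h3 - ...)).

    Assumption: the sample is consistent with some nested difference of boxes.
    If no progress is made within the iteration budget, a ValueError is raised.
    """
    boxes: List[Box] = []
    remaining = list(sample)
    target = True  # label whose closure is taken at the current depth
    for _ in range(len(sample) + 2):
        pts = [p for p, y in remaining if y == target]
        if not pts:
            return boxes  # nothing left to carve out at this depth
        box = closure(pts)
        boxes.append(box)
        # Examples outside the box are already classified correctly;
        # only those inside it are passed on to the next depth.
        remaining = [(p, y) for p, y in remaining if in_box(box, p)]
        target = not target
    raise ValueError("sample appears inconsistent with a nested difference of boxes")


def predict(boxes: List[Box], p: Point) -> bool:
    """p is positive iff it lies in an odd number of leading boxes."""
    depth = 0
    for box in boxes:
        if not in_box(box, p):
            break
        depth += 1
    return depth % 2 == 1


# Toy sample: a large box with a smaller box removed from its interior.
sample = [
    ((0, 0), True), ((10, 10), True), ((0, 10), True), ((10, 0), True), ((1, 5), True),
    ((3, 3), False), ((7, 7), False), ((5, 5), False), ((12, 12), False),
]
hypothesis = learn_nested_difference(sample)
```

On this toy sample the sketch produces two boxes, so `predict(hypothesis, (1, 1))` is positive while `predict(hypothesis, (5, 4))` and `predict(hypothesis, (11, 5))` are negative. The iteration cap is a design choice of this sketch to guard against samples that no nested difference of boxes can fit.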

Cite this article

Helmbold, D., Sloan, R. & Warmuth, M.K. Learning Nested Differences of Intersection-Closed Concept Classes. Machine Learning 5, 165–196 (1990). https://doi.org/10.1023/A:1022696716689
